Building an eBPF/XDP NAT-Based (Weighted) Least Connection Load Balancer from Scratch

In the previous tutorial, we built a NAT-based eBPF/XDP load balancer using hash-based backend selection. A key benefit of that setup was that both requests and responses passed through the load balancer, allowing easy bidirectional traffic inspection and deterministic, stateless load distribution.

And for short-lived connections, this approach is often good enough because many independent flows average out across backends over time.

However, with long-lived or persistent connections (e.g., HTTP keep-alive or WebSockets), even small initial imbalances can persist and accumulate, leaving some backends asymmetrically overloaded.

So although hash-based load balancing has clear benefits, it does not account for backend load factors such as the number of active connections or ongoing request volume.

While these load factors could be combined with hash-based load balancing, this lab focuses solely on a (weighted) least-connections implementation.

💡 All packet parsing, NAT rewriting, FIB lookup, and checksum logic remain unchanged from the NAT-based XDP load balancer lab and won't be re-explained here.

💡 This lab was built in collaboration with Sambhav Singh, an Executive Member of ACM.

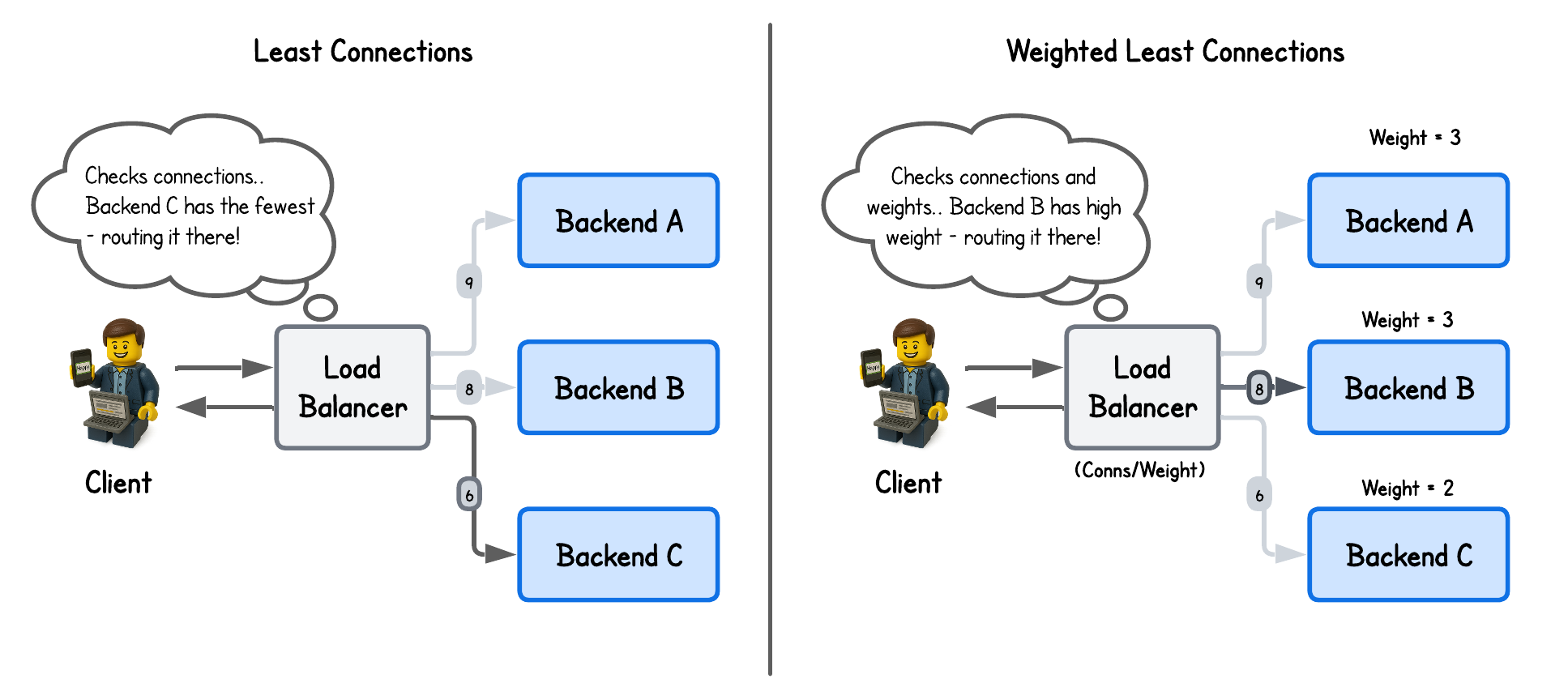

Least-Connections Load Balancing

Many common load-balancing strategies, such as round-robin or hashing, aim to distribute requests evenly across backends but ignore real-time server load.

Least-connections load balancing fixes this by routing new connections to the backend with the fewest active ones, adapting dynamically to imbalances.

To enable this strategy, each backend must track its current connection count, updating it as connections are established and terminated:

```c
// We could also include port information, but for simplicity we assume
// that both the LB and the backends listen for requests on the same port
struct endpoint {
    __u32 ip;
};

struct backend {
    // Backend endpoint information (currently only IP, but could be extended with port or other metadata)
    struct endpoint endpoint;

    // Number of active connections to this backend, used for least-connections load balancing algorithm
    __u32 num_connections;
};
```

These backend entries are stored in a BPF_MAP_TYPE_ARRAY eBPF map. For simplicity, the number of backends is fixed (NUM_BACKENDS = 2):

```c
struct {
    __uint(type, BPF_MAP_TYPE_ARRAY);
    __uint(max_entries, NUM_BACKENDS);
    __type(key, __u32);
    __type(value, struct backend);
} backends SEC(".maps");
```

The eBPF/XDP program then selects a backend using the least-connections algorithm:

```c
...
__u32 key = 0;
__u32 min_connections = (__u32)-1; // Max value for an unsigned 32-bit int
struct backend *candidate_backend = NULL;

// Linear scan over the small, fixed-size backends array
for (__u32 i = 0; i < NUM_BACKENDS; i++) {
    __u32 idx = i;
    struct backend *b = bpf_map_lookup_elem(&backends, &idx);

    // Keep the backend with the fewest active connections;
    // the strict '<' means ties go to the lower index
    if (b && b->num_connections < min_connections) {
        min_connections = b->num_connections;
        key = idx;
        candidate_backend = b;
    }
}
...
```
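To experiment with this selection logic outside the kernel, here is a minimal user-space C sketch. It assumes a plain array stands in for the backends map. The names `struct backend_sim`, `pick_least_connections` and `simulate_sequential_opens` are hypothetical and not part of the lab code. As in the loop above, ties go to the lower index.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_BACKENDS 2

/* Hypothetical user-space stand-in for `struct backend`. */
struct backend_sim {
    uint32_t ip;              /* IPv4 address in host byte order (unused by the algorithm) */
    uint32_t num_connections; /* active connections */
};

/* Index of the backend with the fewest active connections, or -1 if none
 * is found (e.g. n == 0). The strict '<' makes ties go to the lowest index. */
static int pick_least_connections(const struct backend_sim *backends, size_t n) {
    uint32_t min_connections = UINT32_MAX;
    int key = -1;
    for (size_t i = 0; i < n; i++) {
        if (backends[i].num_connections < min_connections) {
            min_connections = backends[i].num_connections;
            key = (int)i;
        }
    }
    return key;
}

/* Opens `count` connections one after another (each handshake completes
 * before the next SYN arrives), recording the backend index each one lands on. */
static void simulate_sequential_opens(struct backend_sim *backends, size_t n,
                                      int *picks, size_t count) {
    for (size_t c = 0; c < count; c++) {
        int idx = pick_least_connections(backends, n);
        assert(idx >= 0);
        backends[idx].num_connections++;
        picks[c] = idx;
    }
}
```

Assume index 0 holds 192.168.178.11, i.e. the first address passed to -backends. Under that assumption, ten sequential opens alternate 0, 1, 0, 1, ... and end with five connections per backend. That is the same pattern as the least-connections log in the Running the Load Balancer section.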

But how do we know if a backend truly has an active connection?

Well, this is where things get interesting.

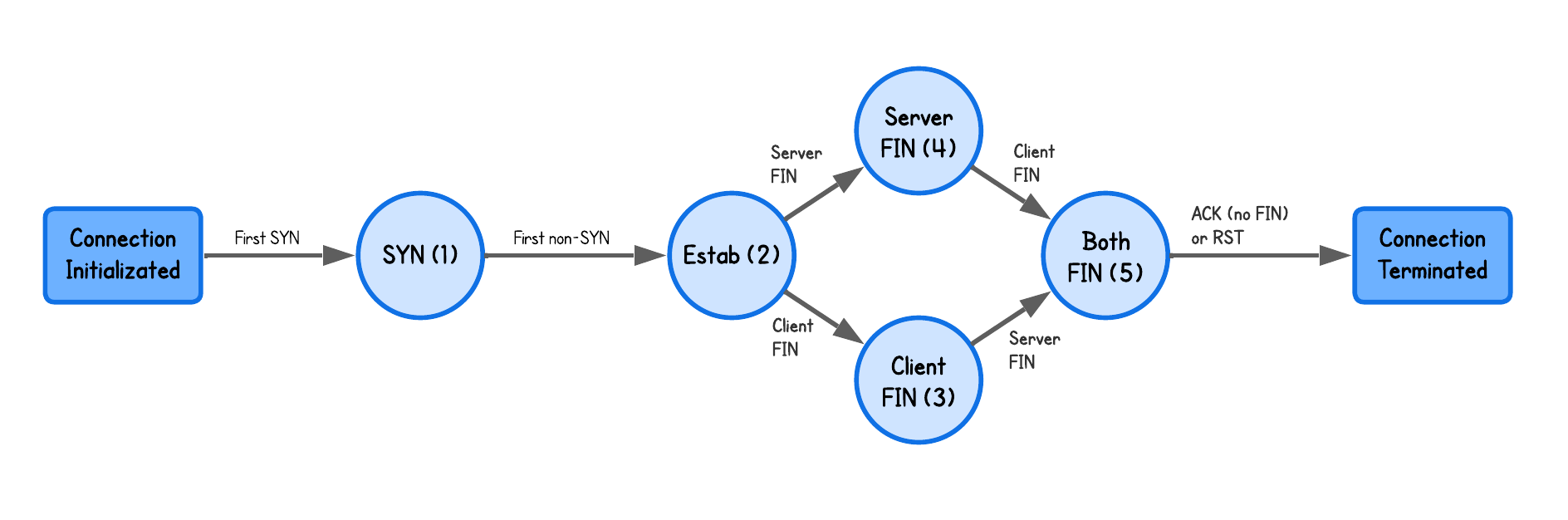

Setting load balancing aside, a TCP connection between two machines is established by the three-way handshake (SYN, SYN-ACK, ACK). A SYN alone does not mean a connection is established, since:

- SYN packets may be retransmitted,

- the server might refuse the connection with an RST,

- packets might be corrupted or dropped en route.

Therefore, simply counting SYN packets (or, more precisely, how many times a backend is selected) would overcount its load.

Similarly, TCP terminates a connection with a four-way handshake (each side sends a FIN and ACKs the other's), not a single FIN exchange. Decrementing the connection count upon the first FIN would break the count when FINs are retransmitted. It could also lead to incorrect routing of the traffic that still flows on the half-closed connection.

To handle this correctly, we need to maintain a per-connection state machine that tracks each TCP flow through its lifecycle.

In code, it looks like this:

```c
enum tcp_state {
    TCP_STATE_UNKNOWN          = 0, // Initial placeholder
    TCP_STATE_SYN_SEEN         = 1, // SYN seen from client, handshake not complete
    TCP_STATE_ESTABLISHED      = 2, // ACK seen from client, handshake complete
    TCP_STATE_FIN_FROM_CLIENT  = 3, // FIN seen from client
    TCP_STATE_FIN_FROM_BACKEND = 4, // FIN seen from backend
    TCP_STATE_FIN_BOTH         = 5, // FIN seen from both sides, waiting for final ACK
};

static __always_inline void update_tcp_conn_state(struct five_tuple_t five_tuple,
                                                  struct connection *conn,
                                                  struct tcphdr *tcp,
                                                  int direction /* 0 = client->backend, 1 = backend->client */) {
    switch (conn->state) {
    // Should be SYN from client to backend
    case TCP_STATE_UNKNOWN:
        if (direction == 0 && tcp->syn) {
            conn = update_state(&five_tuple, conn, TCP_STATE_SYN_SEEN);
        }
        return;

    // Should be ACK from client to backend completing the handshake
    case TCP_STATE_SYN_SEEN:
        if (direction == 0 && !tcp->syn && tcp->ack) {
            conn = update_state(&five_tuple, conn, TCP_STATE_ESTABLISHED);
            if (!conn) return;

            // Increment the connection count for the selected backend
            struct backend *b = bpf_map_lookup_elem(&backends, &conn->backend_index);
            if (b) {
                // Note: a plain ++ is not atomic across CPUs;
                // __sync_fetch_and_add(&b->num_connections, 1) would avoid lost updates
                b->num_connections++;
                bpf_map_update_elem(&backends, &conn->backend_index, b, BPF_ANY);
                bpf_printk("Selected backend with IP %pI4 with current number of connections equal to %u", &b->endpoint.ip, b->num_connections);
            }
        }
        return;

    // From an established connection we need to see FINs from both sides
    case TCP_STATE_ESTABLISHED:
    case TCP_STATE_FIN_FROM_CLIENT:
    case TCP_STATE_FIN_FROM_BACKEND:
        if (!tcp->fin) return;

        __u8 new_state;
        if (direction == 0) {
            new_state = (conn->state == TCP_STATE_FIN_FROM_BACKEND)
                            ? TCP_STATE_FIN_BOTH
                            : TCP_STATE_FIN_FROM_CLIENT;
        } else {
            new_state = (conn->state == TCP_STATE_FIN_FROM_CLIENT)
                            ? TCP_STATE_FIN_BOTH
                            : TCP_STATE_FIN_FROM_BACKEND;
        }
        conn = update_state(&five_tuple, conn, new_state);
        return;

    // After FINs from both sides, we wait for the final ACK or RST to clean up the connection
    case TCP_STATE_FIN_BOTH:
        if ((tcp->ack && !tcp->fin) || tcp->rst) {
            // Decrement the connection count for the selected backend
            struct backend *b = bpf_map_lookup_elem(&backends, &conn->backend_index);
            if (b) {
                if (b->num_connections > 0) b->num_connections--;
                bpf_map_update_elem(&backends, &conn->backend_index, b, BPF_ANY);
                bpf_printk("Connection closed, backend %pI4 now has %u connections", &b->endpoint.ip, b->num_connections);
            }
            bpf_map_delete_elem(&statetrack, &five_tuple);
        }
        return;

    default:
        return;
    }
}

SEC("xdp")
int xdp_load_balancer(struct xdp_md *ctx) {
    ...
    struct endpoint *out = bpf_map_lookup_elem(&conntrack, &in);
    if (!out) {
        //bpf_printk("Packet from client..");
        ...
        // Update state for both existing and new connections on packets from clients
        update_tcp_conn_state(five_tuple, conn_ptr, tcp, 0);
        ...
    } else {
        //bpf_printk("Packet from backend..");
        ...
        // Update state on packet from backend
        update_tcp_conn_state(out_loadbalancer, conn, tcp, 1);
        ...
    }
    ...
}
```
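The state machine above can be exercised outside the kernel with a user-space C replica. This is a sketch under stated assumptions. `struct flags`, `struct conn_sim` and `step` are hypothetical stand-ins for the TCP header, the tracked connection and update_tcp_conn_state(). A plain counter pointer replaces the backends map lookup. The transitions mirror the eBPF code one-to-one.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Same states as enum tcp_state in the eBPF code. */
enum tcp_state {
    TCP_STATE_UNKNOWN = 0,
    TCP_STATE_SYN_SEEN = 1,
    TCP_STATE_ESTABLISHED = 2,
    TCP_STATE_FIN_FROM_CLIENT = 3,
    TCP_STATE_FIN_FROM_BACKEND = 4,
    TCP_STATE_FIN_BOTH = 5,
};

enum direction { FROM_CLIENT = 0, FROM_BACKEND = 1 };

/* Hypothetical stand-ins for the TCP header flags and a tracked connection. */
struct flags { bool syn, ack, fin, rst; };
struct conn_sim {
    enum tcp_state state;
    bool tracked;            /* false once the entry would be deleted from statetrack */
    uint32_t *backend_count; /* the chosen backend's num_connections */
};

/* Mirrors the transitions of update_tcp_conn_state(). */
static void step(struct conn_sim *c, enum direction dir, struct flags f) {
    assert(c != NULL && c->backend_count != NULL);
    if (!c->tracked) return;
    switch (c->state) {
    case TCP_STATE_UNKNOWN:
        if (dir == FROM_CLIENT && f.syn) c->state = TCP_STATE_SYN_SEEN;
        return;
    case TCP_STATE_SYN_SEEN:
        if (dir == FROM_CLIENT && !f.syn && f.ack) {
            c->state = TCP_STATE_ESTABLISHED;
            (*c->backend_count)++; /* counted only once the handshake completes */
        }
        return;
    case TCP_STATE_ESTABLISHED:
    case TCP_STATE_FIN_FROM_CLIENT:
    case TCP_STATE_FIN_FROM_BACKEND:
        if (!f.fin) return;
        if (dir == FROM_CLIENT)
            c->state = (c->state == TCP_STATE_FIN_FROM_BACKEND) ? TCP_STATE_FIN_BOTH
                                                                 : TCP_STATE_FIN_FROM_CLIENT;
        else
            c->state = (c->state == TCP_STATE_FIN_FROM_CLIENT) ? TCP_STATE_FIN_BOTH
                                                                : TCP_STATE_FIN_FROM_BACKEND;
        return;
    case TCP_STATE_FIN_BOTH:
        if ((f.ack && !f.fin) || f.rst) {
            if (*c->backend_count > 0) (*c->backend_count)--;
            c->tracked = false; /* corresponds to bpf_map_delete_elem(&statetrack, ...) */
        }
        return;
    }
}
```

Retransmitted SYNs and FINs leave the count unchanged. Note, however, that an RST arriving while the connection is ESTABLISHED is ignored by this state machine. The count then stays elevated, which is one of the cases that timeout-based cleanup (omitted in this lab) would handle.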

💡 This implementation relies solely on the TCP flags seen in packets (SYN, ACK, FIN, and RST). Production load balancers also use timeouts to clean up stale connections, which is omitted here for simplicity.
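As a rough idea of what such timeout-based cleanup could look like, here is a hypothetical user-space C sketch. Each tracked connection records when it was last seen. A periodic sweep evicts entries that have been idle longer than a timeout and decrements the backend count of connections that had been counted.

The following are all assumptions, not lab code:
- the last_seen_ns and counted fields,
- the 300-second timeout,
- sweep_stale.

In a real setup the timestamp would plausibly come from bpf_ktime_get_ns() in the XDP program, with a user-space agent iterating the map.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_BACKENDS 2
/* Hypothetical idle timeout of 300 seconds, in nanoseconds. */
#define IDLE_TIMEOUT_NS (300ULL * 1000000000ULL)

/* Hypothetical tracked-connection entry extended with a last-seen timestamp. */
struct tracked_conn {
    bool in_use;            /* false once the entry has been evicted */
    bool counted;           /* true once the handshake completed and the count was incremented */
    uint32_t backend_index; /* index into the backends array */
    uint64_t last_seen_ns;  /* monotonic time of the last packet */
};

/* Evicts entries idle for longer than IDLE_TIMEOUT_NS and, for connections
 * that were counted, decrements their backend's count.
 * Assumes a monotonic clock, i.e. last_seen_ns <= now_ns for every entry.
 * Returns the number of evicted entries. */
static size_t sweep_stale(struct tracked_conn *conns, size_t n,
                          uint32_t counts[NUM_BACKENDS], uint64_t now_ns) {
    size_t evicted = 0;
    for (size_t i = 0; i < n; i++) {
        struct tracked_conn *c = &conns[i];
        if (!c->in_use || now_ns - c->last_seen_ns <= IDLE_TIMEOUT_NS)
            continue;
        assert(c->backend_index < NUM_BACKENDS);
        if (c->counted && counts[c->backend_index] > 0)
            counts[c->backend_index]--;
        c->in_use = false;
        evicted++;
    }
    return evicted;
}
```

The counted flag matters: a connection that never completed its handshake (stuck in SYN_SEEN) was never added to num_connections, so evicting it must not decrement anything.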

⚠️ If multiple clients connect at the same time, load may be unevenly distributed because the backend is chosen on the initial SYN, while connection counts update only after establishment. Addressing this would add unnecessary complexity for this lab.
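The race described in this warning can be reproduced with a small user-space C sketch. The names are hypothetical and not lab code. When several SYNs are handled before any handshake completes, every selection sees the same counts, so all of them land on the same backend.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_BACKENDS 2

/* Least-connections pick over plain counters; ties go to the lowest index. */
static int pick(const uint32_t counts[NUM_BACKENDS]) {
    int key = 0;
    for (int i = 1; i < NUM_BACKENDS; i++)
        if (counts[i] < counts[key])
            key = i;
    return key;
}

/* Hypothetical burst: n clients whose SYNs all arrive before any handshake
 * completes. Every backend is chosen first; counts are bumped only afterwards. */
static void simultaneous_connects(uint32_t counts[NUM_BACKENDS], int *picks, size_t n) {
    for (size_t c = 0; c < n; c++)
        picks[c] = pick(counts); /* backend chosen on SYN, counts not yet updated */
    for (size_t c = 0; c < n; c++) {
        assert(picks[c] >= 0 && picks[c] < NUM_BACKENDS);
        counts[picks[c]]++;      /* incremented later, on each handshake's final ACK */
    }
}
```

With three simultaneous clients and empty counters, all three go to backend 0, leaving counts of 3 and 0. The test loop used in this lab opens one connection per second, so it does not hit this case.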

But why track TCP connection state in the same eBPF program instead of in user space or via kernel tracepoints such as tracepoint/sock/inet_sock_set_state?

The reason is that at the XDP hook, packets are processed before they even reach the kernel's TCP stack. Since the load balancer rewrites and forwards them directly from XDP, they never reach it at all, so:

- no sockets exist for these flows,

- no kernel TCP state is available,

- Netfilter conntrack never runs.

Unlike traditional NAT, XDP can’t query the kernel for connection status, close events, or active connection counts. Least-connections load balancing must therefore infer connection lifetime directly from packet headers.

Weighted Least-Connections Load Balancing

While least-connections load balancing spreads the load across backend servers based on their number of connections, the servers themselves might have different capacities (CPU, memory, etc.).

Weighted least-connections extends this approach by assigning traffic in proportion to each node's capacity, so more capable nodes receive more connections.

To implement this, we assign a weight to each backend (the code is in the /lab-wlc directory):

```c
struct endpoint {
    __u32 ip;
};

struct backend {
    // Backend endpoint information (currently only IP, but could be extended with port or other metadata)
    struct endpoint endpoint;

    // Number of active connections to this backend, used for least-connections load balancing algorithm
    __u32 num_connections;

    // Backend weight for weighted load balancing algorithms
    __u32 weight;
};
```

And to take the weight into account while selecting the backend, we compute a score that balances current connections against backend capacity (weight).

```c
...
__u32 key = 0;
__u32 min_score = (__u32)-1; // Max value for an unsigned 32-bit int
struct backend *candidate_backend = NULL;

for (__u32 i = 0; i < NUM_BACKENDS; i++) {
    __u32 idx = i;
    struct backend *b = bpf_map_lookup_elem(&backends, &idx);

    if (b && b->weight > 0) {
        // Calculate score: ((connections + 1) * scale) / weight
        // Higher weight reduces the score, making the backend more likely to be picked.
        // We add 1 to num_connections so that weight matters even at 0 connections.
        __u32 score = ((b->num_connections + 1) * 1024) / b->weight;

        if (score < min_score) {
            min_score = score;
            key = idx;
            candidate_backend = b;
        }
    }
}
...
```
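A user-space C sketch of the weighted score makes its behavior easy to check. The weights 1 and 2 below are an assumption for illustration, since the lab's actual weights are not shown here. The helper names are also hypothetical. Index 0 is assumed to be 192.168.178.11 and index 1 to be 192.168.178.12.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_BACKENDS 2
#define SCORE_SCALE 1024u

/* Hypothetical user-space stand-in for the weighted `struct backend`. */
struct wbackend {
    uint32_t num_connections;
    uint32_t weight;
};

/* Same scoring as the eBPF code: ((connections + 1) * 1024) / weight. */
static uint32_t wlc_score(const struct wbackend *b) {
    return ((b->num_connections + 1) * SCORE_SCALE) / b->weight;
}

/* Index of the backend with the lowest score (ties go to the lowest index),
 * skipping zero-weight backends; -1 if none qualifies. */
static int pick_weighted(const struct wbackend *backends, size_t n) {
    uint32_t min_score = UINT32_MAX;
    int key = -1;
    for (size_t i = 0; i < n; i++) {
        if (backends[i].weight == 0) continue;
        uint32_t score = wlc_score(&backends[i]);
        if (score < min_score) {
            min_score = score;
            key = (int)i;
        }
    }
    return key;
}

/* Opens `count` connections one at a time and records where each one lands. */
static void simulate_weighted(struct wbackend *backends, size_t n, int *picks, size_t count) {
    for (size_t c = 0; c < count; c++) {
        int idx = pick_weighted(backends, n);
        assert(idx >= 0);
        backends[idx].num_connections++;
        picks[c] = idx;
    }
}
```

Under these assumed weights, ten sequential connections land on indices 1, 0, 1, 1, 0, 1, 1, 0, 1, 1, with final counts of 3 and 7. That lines up with the /lab-wlc log in the Running the Load Balancer section. This only shows that a 1:2 weight ratio is consistent with that log, not that it is the lab's configuration.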

💡 Because integer division truncates small values to zero, we multiply the connection count by a fixed scale factor before dividing by the weight (e.g., (1 * 1024) / 100 = 10, whereas 1 / 100 = 0).

While we could turn this around, in this case the lower the score a backend has, the better. In other words, backends with more capacity (higher weight) have a lower score and are therefore selected more often.
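The selection above can be written as a formula. Let $c_i$ be the number of active connections and $w_i$ the weight of backend $i$. Ties go to the lowest index $i$:

$$
i^{*} = \arg\min_{i \,:\, w_i > 0} \left\lfloor \frac{1024\,(c_i + 1)}{w_i} \right\rfloor
$$

Ignoring rounding, two backends score equally when $(c_1 + 1)/w_1 = (c_2 + 1)/w_2$. As connections accumulate, each backend's count therefore grows roughly in proportion to its weight, and its share approaches $w_i / \sum_j w_j$. For example, a backend with twice the other's weight ends up with about two thirds of the connections.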

The rest of the code is identical to the least-connections version.

Running the Load Balancer

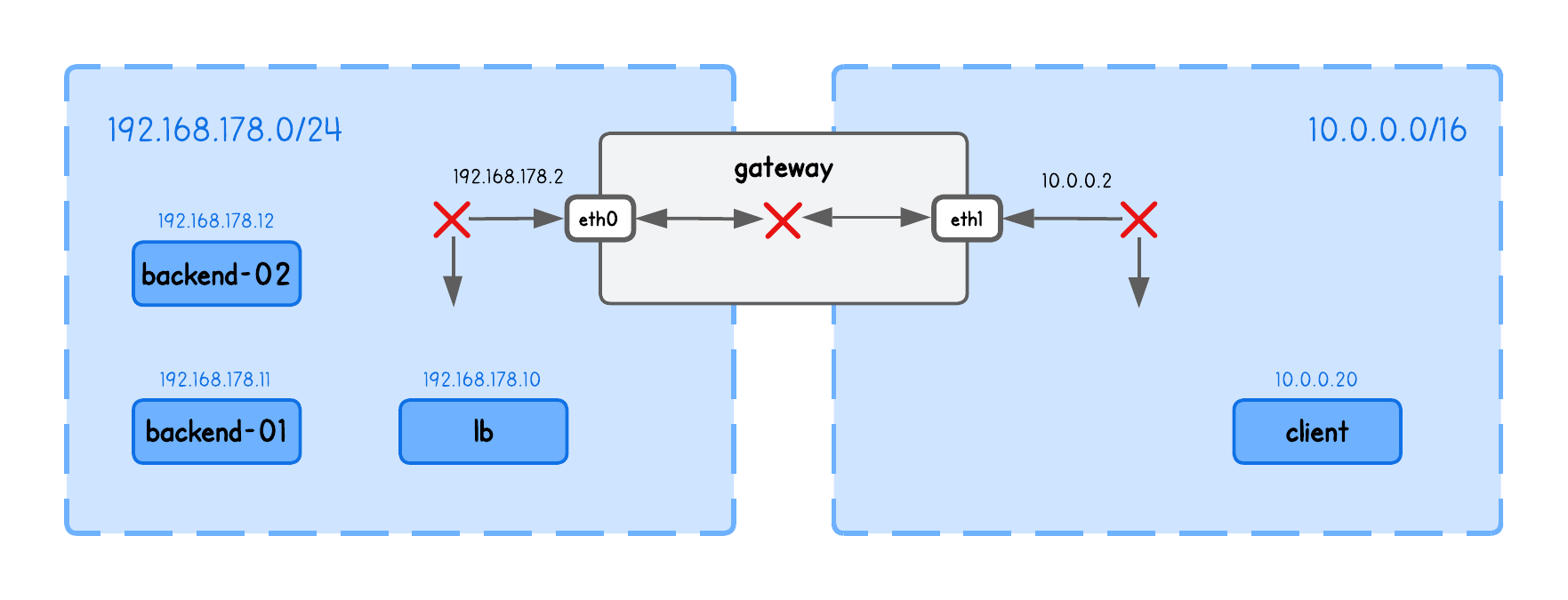

This playground has a network topology with five separate machines on two different networks:

- lb (in network): 192.168.178.10/24

- backend-01 (in network): 192.168.178.11/24

- backend-02 (in network): 192.168.178.12/24

- client (in network): 10.0.0.20/16

- gateway (between networks): 192.168.178.2/24 and 10.0.0.2/16

Before we can actually run our load balancer, we also need to enable packet forwarding between network interfaces (in the lb tab):

```bash
sudo sysctl -w net.ipv4.ip_forward=1
```

This allows the kernel to route packets it receives on one interface out through another — a requirement for the load balancer to forward client traffic to backend nodes.

⚠️ Make sure you've populated the ARP table with the backends' MAC addresses (in the lb tab):

```bash
sudo ping -c1 192.168.178.11
sudo ping -c1 192.168.178.12
```

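See the previous (NAT-based) lab for an explanation of why this is required. In short, the XDP program's FIB lookup can only fill in a backend's destination MAC address if the kernel already has a neighbor (ARP) entry for it.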

Now build and run the XDP Load Balancer in the lb tab (inside the /lab-lc directory), using:

```bash
go generate
go build
sudo ./lb -i eth0 -backends 192.168.178.11,192.168.178.12
```

What are these input parameters?

- -i: the network interface on which this XDP load balancer program will run

- -backends: the comma-separated list of backend IPs in this playground (you need to provide exactly two backends, since NUM_BACKENDS is fixed to 2 for simplicity)

Now run a simple TCP listener on each backend node (tabs backend-1, backend-2) using:

```bash
socat TCP-LISTEN:8000,reuseaddr,fork -
```

Then query the load balancer node's IP from the client tab:

```bash
for i in {1..10}; do
    (echo -e "GET / HTTP/1.1\r\nHost: test\r\n\r\n"; sleep 10) | socat - TCP:192.168.178.10:8000 &
    sleep 1
done
```

💡 This launches 10 background connections (one per second), each sending an HTTP GET request and staying open for 10 seconds to simulate long-lived clients.

Now confirm that the requests are indeed routed through the load balancer node to one of the backends by inspecting the eBPF load balancer logs (in the lb tab):

```bash
sudo bpftool prog trace
```

```text
3720.727565: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 1
3721.729830: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 1
3722.734497: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 2
3723.737485: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 2
3724.737895: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 3
3725.739884: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 3
3726.741861: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 4
3727.744064: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 4
3728.745933: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 5
3729.747781: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 5
3731.230126: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 4 connections
3732.232937: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 4 connections
3733.237359: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 3 connections
3734.238558: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 3 connections
3735.240388: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 2 connections
3736.242546: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 2 connections
3737.244318: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 1 connections
3738.246899: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 1 connections
3739.248625: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 0 connections
3740.250418: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 0 connections
```

Now repeat these same steps inside the /lab-wlc directory.

You will notice that one of the backends handles more of the load due to its weight:

```text
119.899821: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 1
120.902187: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 1
121.902570: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 2
122.905369: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 3
123.905964: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 2
124.908674: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 4
125.909043: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 5
126.911915: bpf_trace_printk: Selected backend with IP 192.168.178.11 with current number of connections equal to 3
127.912275: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 6
128.915038: bpf_trace_printk: Selected backend with IP 192.168.178.12 with current number of connections equal to 7
130.395485: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 6 connections
131.400242: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 2 connections
132.402566: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 5 connections
133.404611: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 4 connections
134.407611: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 1 connections
135.409336: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 3 connections
136.411862: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 2 connections
137.413832: bpf_trace_printk: Connection closed, backend 192.168.178.11 now has 0 connections
138.416346: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 1 connections
139.418130: bpf_trace_printk: Connection closed, backend 192.168.178.12 now has 0 connections
```

Congrats, you've come to the end of this lab 🥳
